The process of speech production and perception in Human Beings - PowerPoint PPT Presentation

Title: The process of speech production and perception in Human Beings

1

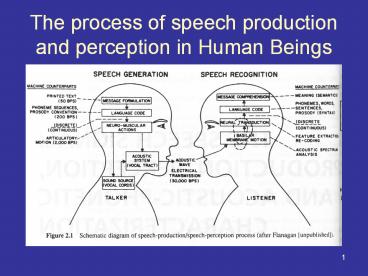

The process of speech production and perception

in Human Beings

2

Speech Generation

- The production process (generation) begins when

the talker formulates a message in his mind which

he wants to transmit to the listener via speech. - In case of machine

- First step message formation in terms of printed

text. - Next step conversion of the message into a

language code.

3

Speech Generation

- After the language code is chosen the talker must

execute a series of neuromuscular commands to

cause the vocal cord to vibrate such that the

proper sequence of speech sounds is created. - The neuromuscular commands must simultaneously

control the movement of lips, jaw, tongue, and

velum.

4

Speech Perception

- The speech signal is generated and propagated to

the listener, the speech perception (recognition)

process begins. - First the listener processes the acoustic signal

along the basilar membrane in the inner ear,

which provides running spectral analysis of the

incoming signal.

5

Sound perception

- The audible frequency range for human is apprx

20Hz to 20KHz - The three distinct parts of the ear are outer

ear, middle ear and inner ear - Outer ear

- Outer ear includes pinna and auditory canal

- It helps to direct an incident sound wave into

middle ear - Filters and modifies the captured sound

6

(No Transcript)

7

(No Transcript)

8

- Outer ear

- The perceived sound is sensitive to the pinnas

shape - By changing the pinnas shape the sound quality

alters as well as background noise - After passing through ear cannal sound wave

strikes the eardrum which is part of middle ear

9

- Middle ear

- Ear drum

- This oscillates with the frequency as that of the

sound wave - Movements of this membrane are then transmitted

through the system of small bones called as

ossicular system - From ossicular system to cochlea

- Cochlea achieves efficient form of impedance

- matching

10

- Inner ear

- It consist of two membranes Reissners membrane

and basilar membrane - When vibrations enter cochlea they stimulate 20

000 to 30 000 stiff hairs on the basilar membrane - These hair in turn vibrate and generate

electrical signal that travel to the brain and

become sound

11

Pinna

Auditory cannal

Tympanic membrane

ossicular system

cochlea

Basilar membrane

12

Speech Perception

- A neural transduction process converts the

spectral signal into activity signals on the

auditory nerve. - The neural activity is converted into a language

code in the brain. - Finally the message comprehension (understanding

of meaning) is achieved.

13

Any coding technique could be used to transmit

the acoustic waveform from the talker to the

listener.

14

The Speech-Production Process

Vocal tract

Lips (end of the vocal cords)

Opening of vocal cords

(Longitudinal cross-section)

15

The Speech-Production Process

- Vocal tract consist of

- Pharynx the connection from the esophagus to the

mouth - Mouth or oral cavity

- The total length is about 17 cm

- The cross-sectional area determined by the

positions of the tongue, lips, jaw, and velum

varies from zero (complete closure) to about 20

cm2 - The nasal tract begins at the velum and ends at

the nostrils.

16

The Speech-Production Process

- Velum a trap-door like mechanism at the back of

the mouth cavity. - When the velum is lowered, the nasal tract is

acoustically coupled to the vocal tract to

produce nasal sounds of speech.

17

The Speech-Production Process

18

The Speech-Production Process

19

The Speech-Production Process

- The lungs and the associated muscles excites the

vocal mechanism. - The muscle force pushes air out of the lungs and

through the bronchi and trachea. - When the vocal cord is tensed, the air flow

causes them to vibrate, produces so called

voice-speech sounds. - When the vocal cord is relaxed a sound is

produced. - Speech is produced as a sequence of sounds.

20

Representing Speech In The Time and Frequency

Domains

The speech signal is slowly time varying signal

21

Representing Speech In The Time and Frequency

Domains

- Depending upon the state of the vocal cords the

events in speech are classified - Silence (S) where no speech is produced

- Unvoiced (U) in which the vocal cords are not

vibrating, so the resulting speech waveform is

aperiodic or random in nature - Voiced (V)in which the vocal cords are tensed

and therefore vibrate periodically when the air

flows from the lungs, so the resulting speech

waveform is quasi periodic (the pulses are not

strictly periodic, but vary slightly from cycle

to cycle).

22

Representing Speech In The Time and Frequency

Domains

- Spectral representation An alternative way of

characterizing the speech signal and representing

the information associated with the sounds. - Sound Spectrogram (most popular representation)

a three dimensional representation of speech

intensity, in different frequency bands, over

time is portrayed.

23

Representing Speech In The Time and Frequency

Domains

Using broad bandwidth analysis filter The

spectral envelope during voiced sections are seen

as vertical striations

Using narrow analysis filter Voiced sections are

seen as horizontal lines.

Vocal tract tube of varying cross sectional

area. So we can apply theory of resonance. Such

resonances are called formants for speech

Formants preferred resonating frequency

24

Wideband spectrogram

- Spectral analysis on 15 msec sections of

waveforms using broad analysis filter of 125Hz

bandwidth

25

Speech Sounds and Features

Front i(IY) I(IH) e(EH) æ(AE)

26

Phonetics

- Phonetics (from the Greek word f???, phone

sound/voice) is the study of sounds (voice). It

is concerned with the actual properties of speech

sounds (phones) as well as those of non-speech

sounds, and their production, audition and

perception

27

- Phonetics has three main branches

- articulatory phonetics, concerned with the

positions and movements of the lips, tongue,

vocal tract and folds and other speech organs in

producing speech - acoustic phonetics, concerned with the properties

of the sound waves and how they are received by

the inner ear - auditory phonetics, concerned with speech

perception, principally how the brain forms

perceptual representations of the input it

receives.

28

- Phoneme (linguistics) one of a small set of

speech sounds that are distinguished by the

speakers of a particular language - Syllable A unit of spoken language larger than

a phoneme

29

Speech Sounds and Features

- The vowels

- The vowel sounds are interesting class of sounds

in English. - The practical speech-recognition systems rely

heavily on vowel recognition to achieve high

performance. - If we omit the vowel letters in the sentence then

resulting text is easy to decode. - But if we omit consonant letters the resulting

text is not decodable.

30

Speech Sounds and Features

They noted significant improvements in the

companys image, supervision, their working

conditions, benefits and opportunities for growth

31

Speech Sounds and Features

- In speaking, vowels are produced by exciting an

essentially fixed vocal tract shape with

quasi-periodic pulses of air caused by the

vibration of the vocal cords. - The way in which cross sectional area varies

along the vocal tract determines the resonance

frequencies of the tract and thereby the sound is

produced.

32

Speech Sounds and Features

- The vowel sound produced is determined by the

position of the tongue. - The positions of the jaw, lips, and to a small

extent, the velum, also influence the result of

the sound.

33

Speech Sounds and Features

- The vowels are long in duration compare to the

consonant sound. - They are spectrally well defined.

- Easily and reliably recognized, both by machine

and by humans.

34

Speech Sounds and Features

A convenient and simplified way of classifying

vowel articulatory configuration is in terms of

tongue hump position (front, mid, back) and

tongue hump height (high, mid, low)

35

Speech Sounds and Features

The concept of a typical vowel sound is

unreasonable in light of the variability of vowel

pronunciation among men, women and children with

different regional accents and other variable

characteristics.

36

Speech Sounds and Features

37

Speech Sounds and Features

- It is not just a simple matter of measuring

formant frequencies or spectral peaks accurately

to accurately classify vowels sounds one must do

some type of talker (accent) normalization to

account for the variability in formants and

overlap between vowels.

38

b but a cat, anger u ado, about d door ã cast,

grass ú up, brother f fall aa arm, calm û book,

put g good aw out, now h happy e bet,

egg j jug eh air, wear k cut ee sleep,

each l list ey day, rain m moon eu coiffeur

n near i tip, inch p part I eye,

fry ch rich r rest o organ, law sh shut s soft ó

cot, orange th theme t turn oo too,

food dh the v village ow toad, own zh confusion w

wet ów cold, whole ng sing y yet oy boy,

boil xh Bach z zoom

39

Fricatives

- Fricatives (or spirants) are consonants produced

by forcing air through a narrow channel made by

placing two articulators close together. These

are the lower lip against the upper teeth in the

case of f , or the back of the tongue against

the soft palate in the case of German x ,

40

Diphthong

- In phonetics, a diphthong (Greek d?f??????,

"diphthongos", literally "with two sounds") is a

vowel combination involving a quick but smooth

movement from one vowel to another, - Often interpreted by listeners as a single vowel

sound or phoneme. - While "pure" vowels, or monophthongs, are said to

have one target tongue position, diphthongs have

two target tongue positions.

41

- Pure vowels are represented in the International

Phonetic Alphabet by one symbol English "sum" as

s?m, for example. - Diphthongs are represented by two symbols, for

example English "same" as se?m, where the two

vowel symbols are intended to represent

approximately the beginning and ending tongue

positions.

42

- Diphthongs in the British English

- ?? as in hope

- a? as in house

- a? as in kite

- e? as in same

- ju? as in few

- ?? as in join

- ?? as in fear

- ?? as in hair

- ?? as in poor

43

Speech Sounds and Features

- Diphthongs

- There are 6 diphthongs in American English

- /ay/(as in buy), /aw/ (as in down), /ey/ (as in

bait), and /?y/ (as in boy), /o/ (as in boat),

and /ju/ (as in you). - The diphthongs are produced by varying the vocal

tract smoothly between vowel configurations

appropriate to the diphthong.

44

Speech Sounds and Features

- Semivowels (A vowel-like sound that serves as a

consonant) - The group of sounds consisting of /w/, /l/, /r/,

and /y/ is quite difficult to characterize.

These sounds are called semivowels because of

their vowel-like nature. - Similar nature to the vowels and diphthongs

- Ex. In how w sounds near to oo in boot

45

- The English word "well" would sound the same if

it were spelled "uell" or "ooell". - Things must be considered in the opposite

manner the fact that spellings "uell" or "ooell"

might be also pronounced as /w/ doesnt mean that

/w/ is a vowel as in boot. The graphical form has

nothing to do with phonetics, so let it aside and

youll find that /w/ is a consonant, and /u()/ a

vowel

46

Speech Sounds and Features

- Nasal Consonants

- The nasal consonants /m/, /n/, /?/ are produced

with glottal excitation and the vocal tract

totally constricted at some point along the oral

passageway - The velum is lowered so that air flows through

the nasal tract, with noise being radiated at the

nostrils.

47

- The oral cavity, although constricted toward

front, is still acoustically coupled to the

pharynx. - The mouth serves as a resonant cavity that traps

acoustic energy at certain natural frequencies.

48

Voiced consonant

- A voiced consonant is a sound made as the vocal

cords vibrate, as opposed to a voiceless

consonant, where the vocal cords are relaxed. See

phonation for a continuum of degrees of tension

in the vocal cords. - Examples of voiced-voiceless pairs of consonants

are - Voiced Voiceless

- b p

- d t

- g k

49

Voiced consonant

- If you place your fingers on your voice box ,

you can feel a buzz when you pronounce zzzz, but

not when you pronounce ssss. That buzz is the

vibration of your vocal cords. Except for this,

the sounds s and z are practically identical,

with the same use of tongue and lips

50

Unvoiced consonant

- In phonetics, a voiceless consonant is a

consonant that doesn't have voicing. That is, it

is produced without vibration of the vocal cords.

- Voiceless consonants are usually articulated more

strongly than their voiced counterparts, because

in voiced consonants, the energy used in

pronunciation is split between the laryngeal

vibration and the oral articulation. - Ex. peculiar and particular.

51

Speech Sounds and Features

- Voiced Fricatives

- The voice fricatives /v/, /th/, /z/, and /zh/.

- The unvoiced fricatives /f/, /?/, /s/, and /sh/,

respectively - For voice fricatives the vocal cords are

vibrating - Since the vocal tract is constricted (narrow) at

some point forward of the glottis, the air flow

becomes turbulent in the neighborhood of the

constriction

52

Speech Sounds and Features

- Voiced Stops

- The voiced stop consonants /b/, /d/, and /g/, are

transient, noncontinuant sounds produced by

building up pressure behind the total

constriction somewhere in the oral tract and then

suddenly reducing the pressure. - For /b/ the constriction is at lips for /d/ it

is at the back of the teeth, and for /g/ it is

near the velum. - Unvoiced stop

- The unvoiced stop consonants /p/, /t/, and /k/.

53

Approaches to Automatic Speech Recognition by

Machine

- Three approaches

- The acoustic-phonetic approach.

- The pattern recognition approach.

- The artificial intelligence apporach

54

Approaches to Automatic Speech Recognition by

Machine

- The smallest meaningful unit (linguistically

distinct) in speech is called a phoneme, which

does not have any meaning alone, but it makes

possible to discriminate between different words. - Speech signals are sequences of linguistically

separable units (phonemes). - Phonemes transitions are mostly relatively

smooth. - A phone signifies the physical sound that is

produced when a phoneme is uttered. - One phoneme can be pronounced in different ways,

therefore a phone group containing similar

variants of a single phoneme is called an

allphone.

55

Approaches to Automatic Speech Recognition by

Machine

- The acoustic phonetic approach is based on the

theory of acoustic phonetics - First step segmentation and labeling

- Second step to determine a valid word

56

Approaches to Automatic Speech Recognition by

Machine

- The pattern-recognition approach to speech

recognition is basically one in which the speech

patterns are used directly without explicit

feature determination and segmentation - Step one training of speech patterns

- Step two recognition of pattern via pattern

comparison - Speech knowledge is brought into the system via

the training procedure

57

Approaches to Automatic Speech Recognition by

Machine

- The pattern recognition is the method of choice

for speech recognition for three reasons - Simplicity of use. The method is easy to

understand, it is rich in mathematical and

communication theory justification for individual

procedures used in training and decoding, and it

is widely used and understood. - Robustness and invariance to different speech

vocabularies, users, feature sets, pattern

comparison algorithms and decision rules. - Proven high performance.

58

Approaches to Automatic Speech Recognition by

Machine

- The AI approach is a hybrid of the

acoustic-phonetic approach and the pattern

recognition approach - The AI approach attempts to mechanize the

recognition procedure according to the way a

person applies its intelligence in visualizing ,

analyzing and finally making a decision on a

measured acoustic features.

59

Acoustic-phonetic Approach to Speech Recognition

Provides spectral representation

60

Acoustic-phonetic Approach to Speech Recognition

- Speech Analysis System (common to all approaches

to speech recognition) Provides an appropriate

representation of the characteristics of the time

varying speech signal. Technique used is linear

predictive coding (LPC) method. - Feature detection stage Convert the spectral

measurements to a set of features that describe

the acoustic properties of the different phonetic

units. - Features

- Nasality presence or absence of nasal resonance

- Friction presence or absence of random

excitation in the speech - Formant locations frequencies of the first three

resonances - Voiced and unvoiced classification periodic and

aperiodic excitation

61

Acoustic-phonetic Approach to Speech Recognition

- 3. The segmentation and labeling phase The

system tries to find stable regions and then to

label the segmented region according to how well

the features within that region match those of

individual phonetic units. - 4. This stage is the heart of the

acoustic-phonetics recognizer and is the most

difficult one to carry out reliably. - So various control strategies are used to limit

the range of segmentation points and label

possibilities. - The final output of the recognizer is the word or

word sequence, in some well-defined sense.

62

Speech Sound Classifier

63

Acoustic Phonetic Vowel Classifier

64

Acoustic Phonetic Vowel Classifier

- Three features have been detected over the

segment, first formant, F1, second formant, F2,

and duration of the segment, D. - The first test separates vowels with low F1from

vowels with high F1. - Each of these subsets can be split further on the

basis of F2 measurement with high F2 and low F2. - The third test is based on segment duration,

which separates tense vowels (large value of D)

from lax vowels (small values of D). - Finally, a finer test on formant values separates

the remaining unresolved vowels, resolving the

vowels into flat vowels and plain vowels

65

Problems In Acoustic Phonetic Approach

- The method requires extensive knowledge of the

acoustic properties of phonetic units. - For most systems the choice of features is based

on intuition and is not optimal in a well defined

and meaningful sense. - The design of sound classifiers is also not

optimal. - No well-defined, automatic procedure exists for

tuning the method on real, labeled speech.

66

Statistical Pattern-Recognition Approach to

Speech Recognition

67

Statistical Pattern-Recognition Approach to

Speech Recognition

- Feature measurement in which sequence of

measurement is made on the input signal to define

test pattern. The feature measurements are

usually the o/p of spectral analysis technique,

such as filter bank analyzer, a LPC, or a DFT

analysis. - Pattern training creates a reference pattern

(for different sound class) called as Template. - Pattern classification unknown test pattern is

compared with each (sound) class reference

pattern and a measure of distance between the

test pattern and each reference pattern is

computed. - Decision logic the reference pattern similarity

(distance) scores are used to decide which

reference pattern best matches the unknown test

pattern.

68

The strengths and weakness of the

Pattern-Recognition model

- The performance of the system is sensitive to the

amount of training data available for creating

sound class reference pattern.( more training,

higher performance) - The reference patterns are sensitive to the

speaking environment and transmission

characteristics of the medium used to create the

speech. (because speech spectral characteristics

are affected by transmission and background

noise) - The method is relatively insensitive syntax and

semantics.

69

The strengths and weakness of the

Pattern-Recognition model

- The system is insensitive to the sound class. So

the techniques are applied to wide range of

speech sounds (phrases). - It is easy to incorporate the syntactic and

semantic constraints directly into the

pattern-recognition structure, thereby improving

recognition accuracy and reducing computation.

70

AI Approaches To Speech Recognition

- The basic idea of AI is to compile and

incorporate the knowledge from variety of

knowledge sources to solve the problem. - Acoustic Knowledge Knowledge related to sound or

sense of hearing - Lexical Knowledge Knowledge of the words of the

language. (decomposing words into sounds) - Syntactic Knowledge Knowledge of syntax

- Semantic Knowledge Knowledge of the meaning of

the language. - Pragmatic Knowledge (sense derived from meaning)

inference ability necessary in resolving

ambiguity of meaning based on ways in which words

are generally used.

71

AI Approaches To Speech Recognition

- Go to the refrigerator and get me a

book . syntactically correct but semantically

inconsistent. - The bears killed the rams. can be interpreted

in two pragmatically different ways (jungle /

football) - Power plants colorless happily old. Syntacticall

y unacceptable and semantically meaningless - Good ideas often run when least

expected. Semantically inconsistent. (corrected

by replacing run by come)

72

AI Approaches To Speech Recognition

- Several ways to integrate knowledge sources

within a speech recognizer. - 1 Bottom-Up

- 2 Top-Down

- 3 Black Board

73

AI Approaches To Speech Recognition

- Bottom-Up Approach The lowest level processes

(feature detection, phonetic decoding) precede

higher level processes (lexical coding) in a

sequential manner

74

AI Approaches To Speech Recognition

- Top-Up Approach in this the language model

generate word hypotheses that are matched against

the speech signal, and syntactically and

semantically meaningful sentences are built up on

the basis of word match scores.

75

AI Approaches To Speech Recognition

76

AI Approaches To Speech Recognition

- Black Board Approach All knowledge sources

(KS) are considered independent