Chapter 6: PowerPoint PPT Presentation

Title: Chapter 6:

1

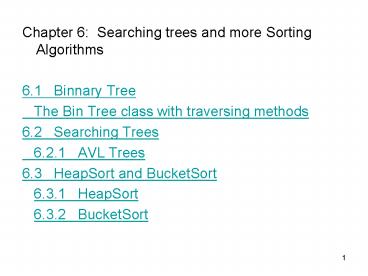

- Chapter 6 Searching trees and more Sorting

Algorithms - 6.1 Binnary Tree

- The Bin Tree class with traversing methods

- 6.2 Searching Trees

- 6.2.1 AVL Trees

- 6.3 HeapSort and BucketSort

- 6.3.1 HeapSort

- 6.3.2 BucketSort

2

6.1 Binary trees (Continuation)

- Characteristics

- Maximal height of a binary tree with n nodes is

n-1. - (this is when each internal node has exactly

one child, this is in fact a linear linked list.)

- Minimal number of nodes in a binary tree of

height h is h1. - (Ditto)

3

- Maximal number of nodes in a binary tree having

higth h N(h) 2h1 - 1 Knoten. - proof by Induction.

- Minimal higth of a binary tree having n b nodes

- O(log n) more precisely log2 (n1)

- 1. - Justification be h the minimal height of a

binary tree with n nodes. then 2 h - 1

N(h -1) lt n ? N(h) 2 h1 - 1 - thus

- 2 h lt n 1 ? 2 h 1

- thus

- h lt log2 (n1) ? h1

4

- Theorem In a nonempty binary tree T, whose

internal nodes have each exactly two children,

the following holds (Leaves(T))

(internal nodes(T)) 1. - Proof by Induction over the size of the tree T.

- Base case Be T a tree wit one node. Then

- (leaves(T)) 1, (internal nodes(T)) 0. This

BC. OK. - Induction Be T a tree with more than a node.

Then the root is an internal node. Let T1 and T2

be the right and left sub-trees. According to the

induction assumption (leaves(Ti)) (internal

nodes(Ti)) 1 for i1,2. we obtain - (leaves(T)) (leaves(T1)) (leaves(T2))

- (internal nodes(T1)) 1 (internal

nodes(T2)) 1 - (internal nodes(T)) 1.

5

ADT-Specification (BinTree)

- algebra BinTree

- sorts BinTree, El, boolean

- ops

- emptyTree ? BinTree

- isEmpty BinTree ? boolean

- isLeaf BinTree ? boolean

- makeTree ? BinTree x El x BinTree ? BinTree

- rootEl BinTree ? El

- leftTree, rightTree BinTree ? BinTree

- sets

- BinTree ltgt ltL,x,Rgt L,R BinTree, x El

- functions

- emptyTree() ltgt

- makeTree(L,x,R) ltL,x,Rgt

- rootEl(lt_,x,_gt) x

- ...

- end BinTree.

6

Traversing methods for binary trees

- ops

- inOrder, preOrder, postOrder BinTree ? List

- functions

- inOrder(ltgt) ltgt

- preOrder(ltgt) ltgt

- postOrder(ltgt) ltgt (leere Liste)

- inOrder(ltL,x,Rgt) inOrder(L) ltxgt inOrder(R)

- preOrder(ltL,x,Rgt) ltxgt preOrder(L)

preOrder(R) - postOrder(ltL,x,Rgt) postOrder(L) postOrder(R)

ltxgt - Where "" describes list concatenation

7

Example binary tree for the expression

((12/4)2)

- inOrder 12, /, 4, , 2

- preOrder , /, 12, 4, 2

- postOrder 12, 4, /, 2,

8

- Implementation in Java

- public class BinTree

- private Object val

- private BinTree right

- private BinTree left

- // Constructors

- BinTree(Object x)

- val x left right null

- BinTree(Object x)

- val x left right null

- BinTree(Object x, BinTree LTree, BinTree RTree)

- val x left LTree right RTree

9

- // Basic methods (accordirg to signature)

- public boolean isLeaf()

- return ( this.left null this.right

null ) - public Object nodeVal() // according.

"rootEl" - return this.val

- public void setNodeVal(Object x)

- this.val x

- public BinTree leftTree()

- return this.left

- public BinTree rightTree()

- return this.right

- public static boolean isEmpty(BinTree T)

- return ( T null )

10

- // traversing

- public static LiLiS preOrder(BinTree T) if (

isEmpty(T) ) return LiLiS.emptyList()

else return conc3(list1(T.nodeVal()),

preOrder(T.leftTree()),

preOrder(T.rightTree()) )

- Etc.

- // helping (internal) methods for LiLiS-Objekte

- private static LiLiS conc3(LiLiS L1, LiLiS L2,

LiLiS L3) - return LiLiS.concat(LiLiS.concat(L1,L2),L3)

- private static LiLiS list1(Object el)

- PCell Cel new PCell(el)

- return new LiLiS(Cel)

11

Array-representation

- Beside an implementation with pointers we can

represent a tree directly in an array - For left-complete binary trees node content in

the following order in the array levels from up

to down an inside each level frpm left to right. - Nodes with index i

- Successor to the right Index 2 i.

- Successor to the left Index 2 i 1.

- parent Index i div 2.

12

Example for array representation

- Note we can also use this for not complete

trees, but in thst case we will have emply places

in the array.

13

Generalization for not binary trees

- A possible definition

- Definition a treeT is a tuple

- T ( x, T1 , ... ,Tk ), where x is a valid

content for the node and and Ti are trees. - Here k 0 is also valid. The corresponding

trivial tree is composed by only one node. - (However with this approach belongs a null not

to the set of trees!)

14

Descriptions and Characteristics

- Grade of a node number of children.

- Grade of a tree T

- grade(T) max grade(k) k nodes in T

- The maximal number of elements in a tree of

height h and grade d is N(h) (d h1 - 1) / (d

- 1).

15

Implementation

- With an array of pointers to the children (only

when the grade is bounded and the number of

children is not too high). - Through a pointer to a list of binary trees a

node has a pointer to the leftmost child and to

the sibling next to the right, for example. - class TreeNode

- private Object val

- private TreeNode leftmostChild

- private TreeNode rightSibling

- ...

16

6.2 Search trees

- Dictionary-Operations

- member

- insert

- delete

- Goal implement these efficiently

17

For comparing

Ordered list (pointer) Non-ordered list (pointer) Ordered list as array

insert O(n) O(1) O(n)

delete O(n) O(n) O(n)

member O(n) O(n) O(log n)

- member in ordered list as array binary search

18

- Definition

- Be T a binary tree.

- N(T) describes the set of nodes of T.

- A mapping N(T) ? D is said to be a node marking

function, where D a range is of complete ordered

values. - A binary tree T with node marking m is called a

search tree when for each sub-tree - T ' ( L, x, R ) in T the following holds

- y from L ? m(y) lt m(x)

- y from R ? m(y) gt m(x)

- Note all marks are different (Dictionary!).

19

Implementation

- We derive the BinSearchTree class from the

BinTree class. - Extension (from the BinTree) marking funktion

- numVal BinSearchTree ? int

- Dictionary-Operations to be implemented

- ops

- member El x BinSearchTree ? boolean insert

El x BinSearchTree ? BinSearchTree delete El X

BinSearchTree ? BinSearchTree

20

Insert

- Algorithm insert(object x, tree T)

- if T empty then

- makeTree(empty,x,empty return

- if ( m(x) lt m(root of T) ) then

- insert(x, left subtree of T)

- else

- insert(x, left subtree of T)

21

Member

- algorithm member(Object x, treeT)

- if T emply return false

- int k m(x)

- int k m(root of T)

- if k k return true

- if k lt k

- return member(x, left sub-tree of T)

- sonst

- return member(x, right sub-tree ofT)

22

Delete

- delete an element

- (may imply restructuring the tree.)

- 1. Search for the sub-tree T ', whose root has

the element to be deleted. - 2. If T ' is a leaf, replace T ' in T by null.

- 3. If T ' has only one sub-tree ( T '' ), replace

T ' by T ''. - 4. Else, delete the smallest element (min) from

the sub-tree at the right of T ' (note min has

at most one sub-tree) and put T '.val min.

(alternatively, delete the node with the biggest

element from the left tree of T ' max and put T '

.val max)

23

Complexity analysis

- Be n the number of nodes in the search tree.

- Costs of a complete traversal O(n)

- Costs for member, insert, delete

- not constant portion search for the right

position in the binary tree across the path

starting from the root - ? O(height of the tree)

24

- Best case complete tree

- height O(log n).

- Thus the complexity of the operations is only

- O(log n).

- Worst case lineal tree (results when inserting

the elements in order) - height n-1.

- complexity of the operations

- for each operation O(n),

- for the for the construction of the tree

through inserting the elements O(n²).

25

Complexity analysis (2)

- Average case

- Complexity of the operations are the order of

the average lenght of the path (average of all

paths in all searching trees having n nodes) - O(log n)

- (siehe Skriptum direkte Abschätzung oder

Berechnung über die Harmonische Reihe)

26

Gesucht Mittlere Astlänge in einem

durchschnittlichen Suchbaum. Dieser sei nur

durch Einfügungen entstanden. Einfügereihenfolge

Alle Permutationen der Menge der Schlüssel a1,

... , an gleichwahrscheinlich. Diese wollen wir

zunächst als sortiert annehmen. A(n) 1

mittlere Astlänge im Baum mit n Schlüsseln A(n)

mittlere Zahl von Knoten auf Pfad in Baum mit

n Schlüsseln. Sei ai1 das erste gewählte

Element. Dann steht dieses Element in der Wurzel.

Im linken Teilbaum finden sich i, im rechten

n-i-1 Elemente. Linker Teilbaum ist zufälliger

Baum mit den Schlüsseln a1 bis ai , rechter mit

den Schlüsseln ai2, ..., an. Die mittlere Zahl

von Knoten auf einem Pfad in diesem Baum ist

daher i/n ( A(i) 1) (n-i-1)/n

(A(n-i-1) 1) 1(1/n).

27

Dabei sind die Beiträge der Teilbäume um eins

vergrößert (für die Wurzel) und mit den

entsprechenden Gewichten belegt. Der letzte Term

betrifft den Anteil der Wurzel. Schließlich muss

über alle möglichen Wahlen von i mit 0 i lt n

gemittelt werden. So erhalten wir A(n) n-2

? 0 i lt n i (A(i)1) (n-i-1)(A(n-i-1)1)

1 Aus Symmetriegründen ist der Anteil der

beiden Terme A(i) und A(n-i-1) gleich, die

konstanten Teile summieren sich zu n und

2(n-1)n/2 auf. Somit folgt A(n) n-2( 2

?0iltn i A(i) (n-1)n n) 1 2n-2?0iltn i

A(i) Wir führen die Abkürzung S(n) ?0iltn i

A(i) ein und erhalten A(n) 1 2n-2

S(n-1) und S(n) - S(n-1) n A(n) n

2 S(n-1) / n , also die Rekursionsformel

S(n) n S(n-1) (n2) / n.

28

Rekursionsformel S(n) n S(n-1) (n2) /

n Außerdem ist S(0) 0 und S(1) A(1) 1.

Nun wollen wir durch Induktion folgende

Ungleichung beweisen S(n) n (n1) ln

(n1) Sicherlich ist dies für n 0 und n 1

richtig. Einsetzen der Rekursionsformel für n-1

ergibt aber beim Schluss von n-1 nach n

S(n) n S(n-1) (n2) / n

n(n-1) (n2) ln n n(n1) ln

(n1) (n1)n (ln n - ln (n1)) - 2 ln n n

n(n1) ln (n1) - (n1) n / (n1)

- 2 ln n n lt n (n1) ln (n1)

Dabei haben wir ln n - ln (n1) -1/(n?),

0lt?lt1, verwendet. Dann folgt aber A(n) 1

2n-2 S(n-1) 1 2 ln n O( log n)

29

6.2.1 AVL-Trees (according to Adelson-Velskii

Landis, 1962)

- In normal search trees, the complexity of find,

insert and delete operations in search trees is

in the worst case ?(n). - Can be better! Idea Balanced trees.

- Definition An AVL-tree is a binary search tree

such that for each sub-tree T ' lt L, x, R gt

h(L) - h(R) ? 1 holds - (balanced sub-trees is a characteristic of

AVL-trees). - The balance factor or height is often annotated

at each node h(.)1.

30

Height(I) hight(D) lt 1

This is an AVL tree

31

This is NOT an AVL tree (node does not hold

the required condition)

32

Goals

- 1. How can the AVL-characteristics be kept when

inserting and deleting nodes? - 2. We will see that for AVL-trees the complexity

of the operations is in the worst case - O(height of the AVL-tree)

- O(log n)

33

Preservation of the AVL-characteristics

- After inserting and deleting nodes from a tree we

must procure that new tree preserves the

characteristics of an AVL-tree - Re-balancing.

- How ? simple and double rotations

34

Only 2 cases (an their mirrors)

- Lets analyze the case of insertion

- The new element is inserted at the right (left)

sub-tree of the right (left) child which was

already higher than the left (right) sub-tree by

1 - The new element is inserted at the left (right)

sub-tree of the right (left) child which was

already higher than the left (right) sub-tree by

1

35

Rotation (for the case when the right sub-tree

grows too high after an insertion)

Is transformed into

36

Double rotation (for the case that the right

sub-tree grows too high after an insertion at its

left sub-tree)

Double rotation

Is transformed into

37

b

First rotation

a

c

W

Z

x

y

new

a

Second rotation

b

W

c

x

new

y

Z

38

Re-balancing after insertion

- After an insertion the tree might be still

balanced or - theorem After an insertion we need only one

rotation of double-rotation at the first node

that got unbalanced in order to re-establish

the balance properties of the AVL tree. - ( on the way from the inserted node to the

root). - Because after a rotation or double rotation the

resulting tree will have the original size of the

tree!

39

The same applies for deleting

- Only 2 cases (an their mirrors)

- The element is deleted at the right (left)

sub-tree of which was already smaller than the

left (right) sub-tree by 1 - The new element is inserted at the left (right)

sub-tree of the right (left) child which was

already higher that the left (right) sub-tree by

1

40

The cases

Deleted node

1

1

1

41

Re-balancing after deleting

- After deleting a node the tree might be still

balanced or - Theorem after deleting we can restore the AVL

balance properties of the sub-tree having as root

the first node that got unbalanced with just

only one simple rotation or a double rotation. - ( on the way from the deleted note to the

root). - However the height of the resulting sub-tree

might be shortened by 1, this means more

rotations might be (recursively) necessary at the

parent nodes, which can affect up to the root of

the entire tree.

42

About Implementation

- While searching for unbalanced sub-tree after an

operation it is only necessary to check the

parents sub-tree only when the sons sub-tree

has changed it height. - In order make the checking for unbalanced

sub-trees more efficient, it is recommended to

put some more information on the nodes, for

example the height of the sub-tree or the

balance factor (height(left sub-tree)

height(right sub-tree)) This information must be

updated after each operation - It is necessary to have an operation that returns

the parent of a certain node (for example, by

adding a pointer to the parent).

43

Complexity analysis worst case

- Be h the height of the AVL-tree.

- Searching as in the normal binary search tree

O(h). - Insert the insertion is the same as the binary

search tree (O(h)) but we must add the cost of

one simple or double rotation, which is constant

also O(h). - delete delete as in the binary search tree(O(h))

but we must add the cost of (possibly) one

rotation at each node on the way from the deleted

node to the root, which is at most the height of

the tree O(h). - All operations are O(h).

44

Calculating the height of an AVL tree

- Be N(h) the minimal number of nodes

- In an AVL-tree having height h.

- N(0)1, N(1)2,

- N(h) 1 N(h-1) N(h-2) für h ? 2.

- N(3)4, N(4)7

- remember Fibonacci-numbers

- fibo(0)0, fibo(1)1,

- fibo(n) fibo(n-1) fibo(n-2)

- fib(3)1, fib(4)2, fib(5)3, fib(6)5, fib(7)8

- By calculating we can state

- N(h) fibo(h3) - 1

Principle of construction

0

1

2

3

45

- Be n the number of nodes of an AVL-tree of height

h. Then it holds that - n ? N(h) ,

- By making p (1 sqrt(5))/2 und q (1-

sqrt(5))/2 - we can now write

- n ? fibo(h3)-1

- ( ph3 qh3 ) / sqrt(5) 1

- ? ( p h3/sqrt(5)) 3/2,

- thus

- h3logp(1/sqrt(5)) ? logp(n3/2),

- thus there is a constant c with

- h ? logp(n) c

- logp(2) log2(n) c

- 1.44 log2(n) c O(log n).

46

6.3.1 Heapsort

- Idea two phases

- 1. Construction of the heap

- 2. Output of the heap

- For ordering number in an ascending sequence use

a Heap with reverse order the maximum number

should be at the root (not the minimum). - Heapsort is an in-situ-Procedure

47

Remembering Heaps change the definition

- Heap with reverse order

- For each node x and each successor y of x the

following holds m(x) ? m(y), - left-complete, which means the levels are filled

starting from the root and each level from left

to right, - Implementation in an array, where the nodes are

set in this order (from left to right) .

48

Second Phase

Heap

Heap

Ordered elements

Ordered

elements

- 2. Output of the heap

- take n-times the maximum (in the root,

deletemax) - and exchange it with the element at the end

of the heap. - ? Heap is reduced by one element and the

subsequence of ordered elements at the end of the

array grows one element longer. - cost O(n log n).

49

First Phase

- 1. Construction of the Heap

- simple method n-times insert

- Cost O(n log n).

- making it better consider the array a1 n

as an already left-complete binary tree and

let sink the elements in the following sequence ! - an div 2 a2 a1

- (The elements an an div 2 1 are

already at the leafs.)

HH The

leafs of the heap

50

- Formally heap segment

- an array segment a i..k ( 1 ? i ? k ltn ) is

said to be a heap segment when following holds - for all j from i,...,k

- m(a j ) ? m(a 2j ) if 2j ? k and

- m(a j ) ? m(a 2j1) if 2j1 ? k

- If ai1..n is already a heap segment we can

convert ain into a heap segment by letting

ai sink.

51

Cost calculation

- Be k log n1 - 1. (the height of the

complete portion of the heap) - cost

- For an element at level j from the root k j.

- alltogether ?j0,,k (k-j)2j

- 2k ?i0,,k i/2i 2 2k O(n).

52

advantage

- The new construction strategy is more efficient !

- Usage when only the m biggest elements are

required - 1. construction in O(n) steps.

- 2. output of the m biggest elements in

- O(mlog n) steps.

- total cost O( n mlog n).

53

Addendum Sorting with search trees

- Algorithm

- Construction of a search tree (e.g. AVL-tree)

with the elements to be sorted by n insert

opeartions. - Output of the elements in InOrder-sequence.

- ? Ordered sequence.

- cost 1. O(n log n) with AVL-trees,

- 2. O(n).

- in total O(n log n). optimal!