ETL Tools and Their Applications in Data Warehousing


Maintaining a data warehouse isn't just about running a database system. A lot more needs to be taken care of. For instance, the way information gets into a data warehouse is an entire mechanism in itself, one that determines how the data is handled in transit and the formats it must follow to become available.

This is where ETL tools fit in. ETL (extract, transform, load) is the standard model under which information from various systems, usually built and supported by separate vendors, departments, or stakeholders, is combined into a single repository, data store, or warehouse for long-term storage or analysis. Extraction is the process of collecting data from multiple types of sources. Transformation converts the stored data into the correct format for analysis and interpretation.
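The three stages named above (extract, transform, load) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the CSV text, the `sales` table, and the function names are hypothetical, and an in-memory SQLite database stands in for a real warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical source data; a real pipeline would read files, APIs, or databases.
SOURCE_CSV = """first_name,last_name,amount
Ada,Lovelace,120.50
Alan,Turing,80.00
"""

def extract(text):
    """Extract: read raw rows from a CSV source as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: merge the name columns and convert amounts to numbers."""
    return [
        (f"{r['first_name']} {r['last_name']}", float(r["amount"]))
        for r in rows
    ]

def load(conn, records):
    """Load: write the transformed records into the target table."""
    conn.execute("CREATE TABLE IF NOT EXISTS sales (customer TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?)", records)
    conn.commit()

def run_pipeline(conn, text=SOURCE_CSV):
    """Run the three stages in order and return what ended up in the target."""
    load(conn, transform(extract(text)))
    return conn.execute(
        "SELECT customer, amount FROM sales ORDER BY customer"
    ).fetchall()

if __name__ == "__main__":
    warehouse = sqlite3.connect(":memory:")  # stand-in for a real warehouse
    print(run_pipeline(warehouse))
```

Keeping each stage in its own function makes it possible to test or replace one stage without touching the others, which is the main practical appeal of the model.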

Loading takes place when the transformed data is written to the database server, data store, data mart, or warehouse. In general, ETL prepares data so that it is relevant and available for analysis. The best automated ETL tools are built to be fast, reliable, high-performing, flexible, and stable. More specifically, ETL serves a crucial role that should not fall solely on overstretched or under-trained IT teams, especially when so much depends on the data warehouse and the critical answers the business seeks from it. The truth is that no matter how experienced the IT team might be, growing data demands can continuously pose problems for any organization, exhausting personnel, facilities, and budgets, and wasting precious resources just to keep up with custom, manual setups.

Traditional vs Modern ETL Process

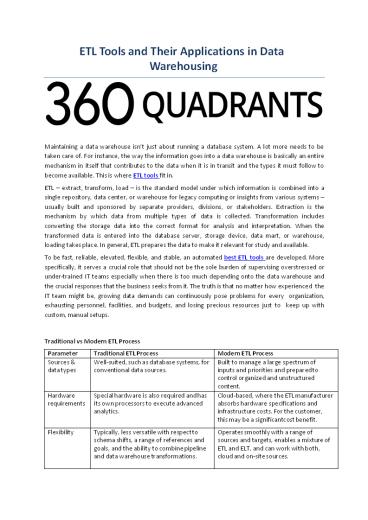

| Parameter | Traditional ETL Process | Modern ETL Process |
| --- | --- | --- |
| Source data types | Well suited to conventional data sources such as database systems. | Built to handle a wide range of sources and targets, including both structured and unstructured data. |
| Hardware requirements | Often requires dedicated hardware with its own processors to execute advanced analytics. | Cloud-based: the ETL vendor absorbs hardware requirements and infrastructure costs, which can be a significant cost benefit for the customer. |
| Flexibility | Typically less flexible with respect to schema changes, the range of sources and targets, and the ability to combine in-pipeline and in-warehouse transformations. | Works smoothly with a range of sources and targets, supports a mix of ETL and ELT, and can work with both cloud and on-site sources. |
| Real-time vs. batch | Processes data in batches. | Processes data in batches or in real time. |
| Security | Relatively simple because all components are on site, provided users put the right measures in place. | The vendor provides security and privacy. |
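To make the batch versus real-time distinction in the comparison above more concrete, here is a minimal Python sketch. It is an assumption-laden illustration, not how any particular ETL product works: `process`, `run_batch`, and `run_streaming` are hypothetical names, and the standard library's `queue.Queue` is only an in-process stand-in for a distributed message queue.

```python
import queue

def process(record):
    """Placeholder transformation: convert a text reading to an integer."""
    return int(record)

def run_batch(accumulated):
    """Batch style: wait until records have piled up, then process them together."""
    return [process(r) for r in accumulated]

def run_streaming(events, on_result):
    """Streaming style: handle each record as soon as it is taken off the queue."""
    while True:
        record = events.get()
        if record is None:  # sentinel value: the producer has finished
            break
        on_result(process(record))

if __name__ == "__main__":
    # Batch: everything is available up front and handled in one pass.
    print(run_batch(["1", "2", "3"]))

    # Streaming: records are consumed one at a time as they arrive.
    events = queue.Queue()
    for item in ["4", "5", None]:
        events.put(item)
    run_streaming(events, on_result=print)
```

The transformation logic is identical in both cases; what changes is when it runs, which is why the choice is mostly driven by how quickly results are needed.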

Types of ETL Tools

Here's a glance at the specific kinds of ETL tools and what they can do for the business.

Batch processing techniques

Incumbent batch processing tools consolidate data during off-hours, when demand for computing power is lower. They prepare data without affecting performance elsewhere, which suits kinds of data that are less dependent on speed (think weekly or annual calculations, such as income or compensation tracking).

Open source tools

Like most open source software, open source ETL tools are easy to integrate with other systems and particularly attractive to businesses with limited development budgets. Because open source development is collaborative, users can expect a level of accountability, flexibility, and up-to-date features that other alternatives may lack in important respects.

Cloud-based tools

Although batch processing has usually been the domain of on-site database systems, the cloud now offers new batch processing tools. They deliver the same advantages as older legacy applications, along with today's cloud benefits such as real-time support, built-in security, and intelligent schema detection.

Real-time tools

Most businesses today use a large number of modern applications that require up-to-date data. Real-time ETL tools use a completely different model from the other solutions, one based on distributed message queues (decoupled communication between separate programs) and stream processing (continuous handling of data as it flows). The net result is that businesses can query data and get answers quickly, not only when it is convenient for the system.

Which ETL Tool is Right for You?

Although most, if not all, of the above options will serve an organization well in some way, each is built to suit particular requirements best.

Incumbent batch

Best for companies that prefer to use on-site technology and/or existing vendors and are far less concerned about producing real-time results.

Open source

Suitable for organizations that are comfortable maintaining and supporting open source technology, or that choose to build an ETL solution themselves using leading open source technologies.

Cloud-based

Best for companies that prefer tools built for and delivered from the cloud and want to keep costs down by not having to procure or maintain hardware.

Real-time

Best for companies that need a way to handle large volumes of data or streaming information, scale operations up or down as required, and process events in real time.

Implementing the ETL process in the data warehouse

The ETL process has three distinct steps.

Extract

This phase involves retrieving data from the source system into the staging area. Any transformations are made in the staging area so that the performance of the source system is not degraded. Also, if users copy corrupted data directly from the source into the data warehouse, rolling it back can be a problem. Before moving data into the data warehouse, users can validate the extracted data in the staging area. A data warehouse has to integrate systems that differ in hardware, DBMS, operating system, and networking.
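The staging-area check described in this step could look roughly like the following Python sketch. Everything specific here is an assumption made for illustration: the `staging_orders` and `orders` tables, the validation rule, and the use of a single in-memory SQLite database for both staging and warehouse.

```python
import sqlite3

def stage_rows(conn, rows):
    """Copy extracted rows into a staging table without altering them."""
    conn.execute("CREATE TABLE staging_orders (order_id TEXT, amount TEXT)")
    conn.executemany("INSERT INTO staging_orders VALUES (?, ?)", rows)
    conn.commit()

def is_valid(order_id, amount):
    """Illustrative rule: a non-empty id and a non-negative numeric amount."""
    if not order_id:
        return False
    try:
        return float(amount) >= 0
    except (TypeError, ValueError):
        return False

def promote_valid_rows(conn):
    """Move rows that pass validation into the warehouse table.

    Returns the rejected rows so they can be inspected or corrected.
    """
    conn.execute("CREATE TABLE orders (order_id TEXT, amount REAL)")
    staged = conn.execute(
        "SELECT order_id, amount FROM staging_orders ORDER BY rowid"
    ).fetchall()
    rejected = []
    for order_id, amount in staged:
        if is_valid(order_id, amount):
            conn.execute(
                "INSERT INTO orders VALUES (?, ?)", (order_id, float(amount))
            )
        else:
            rejected.append((order_id, amount))
    conn.commit()
    return rejected

if __name__ == "__main__":
    db = sqlite3.connect(":memory:")
    stage_rows(db, [("A1", "10.5"), ("", "3"), ("A3", "oops")])
    print("rejected:", promote_valid_rows(db))
```

Because bad rows never reach the `orders` table, there is nothing to roll back in the warehouse itself; the rejects stay in staging where they can be examined.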

Sources include legacy applications such as mainframes and custom software, point-of-contact devices such as ATMs and call switches, text files, spreadsheets, ERP systems, and data from partners and suppliers. As a consequence, users need a logical data map before extracting and loading data. The data map describes the relationship between the sources and the target data.

Transform

In its original state, the data extracted from the source system is raw and not usable. Because of this, users need to cleanse, map, and convert it. This is the most important step, where the ETL process adds value and changes the data so that insightful BI reports can be produced. In this second stage, a set of functions is applied to the extracted data. Data that does not require any transformation is called direct move or pass-through data. Users can also perform custom operations on the data. For example, a user may need a total sales revenue figure that is not stored in the database, or the first and last names in a table may sit in different columns; such values can be computed or merged into a single column before loading.

Load

The last phase of the ETL process loads the data into the data warehouse's target database. In a typical data warehouse, large volumes of data have to be loaded within a relatively short time window.
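One common way to cope with large volumes in a short window is to load in batches and record progress as you go. The sketch below is a hedged illustration of that idea, not a prescribed method: the `facts` and `load_checkpoint` tables and the `load_in_batches` function are hypothetical, and SQLite stands in for the warehouse database.

```python
import sqlite3

def load_in_batches(conn, rows, batch_size=1000):
    """Load rows in batches, saving a checkpoint with every batch.

    If a batch fails, its transaction is rolled back and the checkpoint still
    points at the last good batch, so a later call resumes from there.
    Returns the number of rows loaded by this call.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS facts (id INTEGER PRIMARY KEY, value TEXT)"
    )
    conn.execute("CREATE TABLE IF NOT EXISTS load_checkpoint (rows_done INTEGER)")
    saved = conn.execute("SELECT rows_done FROM load_checkpoint").fetchone()
    if saved is None:
        conn.execute("INSERT INTO load_checkpoint VALUES (0)")
    conn.commit()

    done = saved[0] if saved else 0
    start = done
    while done < len(rows):
        batch = rows[done:done + batch_size]
        with conn:  # one transaction per batch: data and checkpoint commit together
            conn.executemany("INSERT INTO facts VALUES (?, ?)", batch)
            done += len(batch)
            conn.execute("UPDATE load_checkpoint SET rows_done = ?", (done,))
    return done - start

if __name__ == "__main__":
    db = sqlite3.connect(":memory:")
    data = [(i, f"value-{i}") for i in range(10)]
    print("rows loaded:", load_in_batches(db, data, batch_size=4))
```

Committing the data and the checkpoint in the same transaction is the design choice that matters here: the two can never disagree, so restarting after a failure neither skips nor duplicates rows.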

Given the volume of data and the limited load window, the load process needs to be optimized for performance. If a load fails, recovery should be configured to restart from the point of failure without sacrificing data integrity. Admins should be able to monitor, resume, and cancel loads according to server performance.

About 360Quadrants

360Quadrants is the largest marketplace looking to disrupt USD 3.7 trillion of technology spend and is the only rating platform for vendors in the technology space. The platform provides users access to unbiased information that helps them make qualified business decisions. The platform facilitates deeper insights using direct engagement with 650 industry experts and analysts and allows buyers to discuss their requirements with 7,500 vendors. Companies get to win ideal new customers, customize their quadrants, decide key parameters, and position themselves strategically in niche spaces, to be consumed by giants and start-ups alike. Experts get to grow their brand and increase their thought leadership. The platform targets the building of a social network that links industry experts with companies worldwide.