Dynamic control of sensor networks with inferential ecosystem models

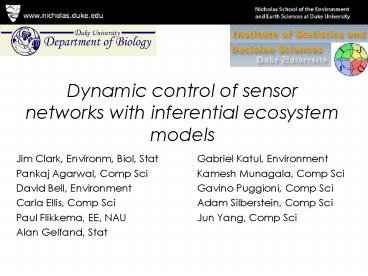

1

Dynamic control of sensor networks with inferential ecosystem models

- Jim Clark, Environm, Biol, Stat

- Pankaj Agarwal, Comp Sci

- David Bell, Environment

- Carla Ellis, Comp Sci

- Paul Flikkema, EE, NAU

- Alan Gelfand, Stat

- Gabriel Katul, Environment

- Kamesh Munagala, Comp Sci

- Gavino Puggioni, Comp Sci

- Adam Silberstein, Comp Sci

- Jun Yang, Comp Sci

2

Motivation

- Understanding forest response to global change (climate, CO2)

- Forces at many scales

- Complex interactions

- Lagged responses

- Uneven data needs: occasionally dense, at different scales

- Wireless networks can provide dense data, across landscapes

3

Ecosystem models that could use wireless data

- Physiology

- PSN, respiration responses to weather, climate

- C/H2O/energy

- Atmosphere/biosphere exchange (pool sizes, fluxes)

- Biodiversity

- Differential demographic responses to weather/climate, CO2, H2O

4

Physiological responses to weather

[Figure: diagram with labels Precip; light, CO2; H2O, CO2; Temp; H2O, N, P; Resp; PSN; Allocation; Sap flux; "Fast, fine scales"]

5

Sensors for ecosystem variables

[Figure: sensor variables linked to Demography, Biodiversity, Physiology, and C/H2O/energy]

- Precip $P_t$

- Evap $E_{j,t}$

- Transpir $Tr_{j,t}$

- Light $I_{j,t}$

- Soil moisture $W_{j,t}$

- Temp $T_{j,t}$

- VPD $V_{j,t}$

- Drainage $D_t$

6

WisardNet: a wireless network

- Multihop, self-organizing

- Sensors for light, soil and air T, soil moisture, sap flux

- Tower weather station

- Minimal in-network processing

[Figure: sensors, nodes, and a gateway linked by self-organizing wireless connections]

7

Mapped stands

- All life history stages: seed rain, seed banks, seedlings, saplings, mature trees

- Interventions: canopy gaps, nutrient additions, herbivore exclosures, fire

- Environmental monitoring: canopy photos, soil moisture, temperature, wireless sensor networks, remote sensing

8

Blackwood Division, Duke Forest

[Figure: network layout showing sensors, nodes, and gateway; fluid topology]

9

The goods and the bads

- The good

- Potential to collect dense data

- Adapts to changing communication potential

- The bad

- Most data uninformative, redundant, or both

- Battery life of weeks to months, depending on transmission rate

- Checking and replacing batteries is the primary maintenance cost of network

10

the ugly

- Unreliable!

[Figure: battery life at 13 nodes, annotated "Network partially down", "Failures", and "Junk"]

11

A dynamic control problem

- What is an observation worth?

- (How to quantify learning?)

- The answer recognizes

- Transmission cost of an observation

- Need to assess value in (near) real time

- Based on model(s)

- Minimal in-network computation capacity

- Use (mostly) local information

- Potential for periodic out-of-network input

12

A framework for data collection

- Predict or collect

- Transmit an observation if it could not have been predicted by a model.

- Fast decisions (real-time)

- Must rely on (mostly) local information

- Minimize transmission
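The predict-or-collect rule above can be sketched in a few lines. This is only an illustration under stated assumptions: the `NodeState` class, the linear-trend predictor, the `tolerance` value, and the readings are all hypothetical and are not taken from the presentation. The node keeps the same state the gateway can reconstruct, so a suppressed observation is replaced by the shared prediction on both sides.

```python
from dataclasses import dataclass


@dataclass
class NodeState:
    """State kept locally by a node (hypothetical names, for illustration only)."""
    last_value: float   # most recent value that the gateway also knows or can predict
    slope: float        # local trend per time step, assumed to be supplied out of network


def predict(state: NodeState) -> float:
    """Cheap in-network point prediction: previous value plus local trend."""
    return state.last_value + state.slope


def predict_or_collect(state: NodeState, observation: float, tolerance: float) -> bool:
    """Return True if the observation should be transmitted."""
    predicted = predict(state)
    transmit = abs(observation - predicted) > tolerance
    # Keep node and gateway in sync: store the observation if it was sent,
    # otherwise store the prediction that both sides can compute.
    state.last_value = observation if transmit else predicted
    return transmit


state = NodeState(last_value=0.30, slope=-0.01)
readings = [0.29, 0.28, 0.27, 0.45, 0.44]   # hypothetical soil moisture; wetting event at step 4
decisions = [predict_or_collect(state, z, tolerance=0.02) for z in readings]
print(decisions)   # [False, False, False, True, False]
```

Only the reading that departs from the trend is sent; the decision uses nothing beyond the node's own state and one parameter that could be refreshed periodically from outside the network.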

13

Predictability of ecosystem data

- Where could a model stand in for data?

[Figure labels: "Slow variables" and "Predictable variables"; "Events" and "Less predictable"]

14

Which observations are informative?

- Shared vs unique data features (within nodes, among nodes)

- Exploit relationships among variables/nodes?

- Slow, predictable relationships?

[Figure: panels for Light, Precipitation, and Soil moisture]

15

Model-dependent learning

- Exploit relations in space, time, and with other variables

- Learning from previous data collection

- If predictable, data have reduced value

[Figure: PAR at 3 nodes, 3 days; observations]
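One way to picture "if predictable, data have reduced value" is to fit a relationship between two nodes on earlier data and then check how far new readings fall from it. The sketch below assumes a simple linear relation between a node and one neighbor. The PAR values, the threshold of 100, and the `fit_line` helper are hypothetical and are not the model used in the presentation.

```python
def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Hypothetical PAR readings (arbitrary units) from two nearby nodes on an earlier day.
neighbor_past = [0, 200, 600, 1000, 600, 200, 0]
node_past = [0, 150, 450, 750, 450, 150, 0]     # this node is partly shaded

intercept, slope = fit_line(neighbor_past, node_past)

# A later day: the neighbor predicts this node well, except at one step.
neighbor_today = [0, 200, 600, 1000, 600]
node_today = [0, 150, 450, 1100, 450]
residuals = [z - (intercept + slope * x) for x, z in zip(neighbor_today, node_today)]
informative = [abs(r) > 100 for r in residuals]
print(informative)   # [False, False, False, True, False]
```

Readings that the fitted relation already explains add little, while the one reading it misses is the one worth keeping.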

16

Controlling measurement with models

- Inferential modeling concerns

- Some parameters local, some global

- Estimates of global parameters need transmission

- Data can't arrive faster than model converges

- Simple rules for local control of transmission

- Rely mostly on local variables

- Periodic updating from out of network

- Transmit if you can't predict

17

In network data suppression

- An acceptable error

- A standard reactive model: based on change

- Alternative: is the observation predictable?

- $z_j$: local sensor data (no transmission)

- ?, $z$, $w_t$: global data, periodically updated from full model

- $M_F$: full, out-of-network model

- $M_I$: simplified, in-network model
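The contrast between the reactive rule and the predictive alternative can be illustrated with a toy series. The sketch below is an assumption-laden stand-in: `eps` plays the role of the acceptable error, the in-network model is reduced to a constant `drying_rate`, and the readings are invented. Neither function is claimed to be the rule used in the presentation.

```python
def reactive_keep(readings, eps):
    """Reactive rule: send a reading once it differs from the last sent value by more than eps."""
    last_sent, sent = readings[0], [0]      # assume the first reading is always sent
    for t in range(1, len(readings)):
        if abs(readings[t] - last_sent) > eps:
            sent.append(t)
            last_sent = readings[t]
    return sent


def predictive_keep(readings, drying_rate, eps):
    """Predictive rule: send a reading only if the simplified model misses it by more than eps."""
    known, sent = readings[0], [0]          # value shared by node and gateway
    for t in range(1, len(readings)):
        predicted = known - drying_rate     # hypothetical in-network model: steady drying
        if abs(readings[t] - predicted) > eps:
            sent.append(t)
            known = readings[t]
        else:
            known = predicted
    return sent


# Hypothetical soil moisture in percent: steady drying with one rain event at step 10.
readings = [40 - t for t in range(10)] + [45 - (t - 10) for t in range(10, 20)]
print(reactive_keep(readings, eps=2))                    # [0, 3, 6, 9, 10, 13, 16, 19]
print(predictive_keep(readings, drying_rate=1, eps=2))   # [0, 10]
```

With the same acceptable error, the reactive rule keeps reporting the steady drying, while the predictive rule sends only the initial value and the rain event.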

18

Out-of-network model is complex

[Figure: hierarchical model diagram with levels Data, Process, Parameters, and Hyperparameters. Elements shown: calibration data (sparse!); sensor data $z_{j,t}$; states y, E, Tr, D at times t-1, t, and t+1; location effects; process parameters; time effects at t-1, t, and t+1; measurement errors; process error; heterogeneity]

19

Soil moisture example

- Simulated process, parameters unknown

- Simulated data

- TDR calibration, error known (sparse)

- 5 sensors, error/drift unknown (dense, but unreliable)

- Out-of-network: estimate process/parameters

- Use estimates for in-network prediction

- Transmit only when predictions exceed threshold
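A rough simulation in the spirit of this example is sketched below. Everything in it is hypothetical: the drying-toward-a-baseline process, the constants `BASE`, `DECAY`, and `RAIN`, the linear drift, the noise level, and the threshold. The out-of-network estimation step is not shown; the process constants are simply treated as plug-in values already available to each node.

```python
import random

BASE, DECAY, RAIN = 0.15, 0.97, 0.15    # hypothetical plug-in process constants
RAIN_STEPS = {25, 75, 125, 175}         # hypothetical rain events


def simulate_truth(n_steps):
    """Soil moisture that relaxes toward BASE and jumps at rain events."""
    y, truth = 0.35, []
    for t in range(n_steps):
        y = BASE + DECAY * (y - BASE) + (RAIN if t in RAIN_STEPS else 0.0)
        truth.append(y)
    return truth


def simulate_sensor(truth, drift, noise_sd, rng):
    """Sensor reading = truth + linear drift + Gaussian noise."""
    return [y + drift * t + rng.gauss(0.0, noise_sd) for t, y in enumerate(truth)]


def keepers(readings, threshold):
    """Indices a node would transmit: readings the plug-in model fails to predict."""
    known, kept = readings[0], [0]      # first reading is always sent
    for t in range(1, len(readings)):
        predicted = BASE + DECAY * (known - BASE)   # model knows drying, not rain or drift
        if abs(readings[t] - predicted) > threshold:
            kept.append(t)
            known = readings[t]
        else:
            known = predicted
    return kept


rng = random.Random(1)
truth = simulate_truth(200)
drifts = [rng.uniform(-2e-4, 2e-4) for _ in range(5)]   # one drift rate per sensor
kept_by_sensor = [
    keepers(simulate_sensor(truth, d, 0.003, rng), threshold=0.03) for d in drifts
]
total_kept = sum(len(k) for k in kept_by_sensor)
print(total_kept, "of", 5 * len(truth), "readings transmitted")
```

In this toy setup the rain events are always transmitted because the plug-in model cannot anticipate them, and additional transmissions appear only as sensor drift accumulates.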

20

Model summary

[Equation labels: Process; Sensor j (random effects); TDR calibration; Inference; Back to node j]

21

Simulated process data

[Figure: truth y with 95% CI; 5 sensors z (colors); calibration w (red dots)]

[Figure: drift parameters, estimates and truth (dashed lines)]

22

Estimates from training phase

[Figure: process parameters, estimates and truth (dashed lines)]

23

Keepers based on acceptable error

- Transmit obs only if the prediction (from plug-in values) misses by more than the acceptable error

- Increasing drift reduces predictive capacity

- Keepers (40), very few until drift accumulates

24

Better data, few observations

Known constraint on missing data

Reanalysis model for missing data

[Figure: truth y with 95% CI; 5 sensors z (colors); calibration w (red dots)]

25

Additional variables

26

Collect or predict

- Inferential ecosystem models: a currency for learning assessment

- In-network simplicity: point predictions based on local info, periodic out-of-network inputs

- Out-of-network: predictive distributions for all variables (reanalysis step)

- A role for inference in data collection, not just data analysis

27

Advantages over reactive data collection in wireless networks

- Change in a variable is not directly linked to its information content

- Example: all soil moisture sensors may change at similar rates, making them largely redundant

- Predictability emphasizes change that contains information

- The capacity to predict an observation summarizes its value and assures that it can be estimated to known precision in the reanalysis
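The redundancy example can be made concrete with three hypothetical nodes: two that dry at the shared rate and one that stays flat. The numbers, the `shared_change` parameter, and both helper functions are assumptions made for illustration, not part of the presentation.

```python
def reactive_flags(series, eps):
    """Steps where the reading moved more than eps since the last flagged reading."""
    last_sent, flags = series[0], []
    for t in range(1, len(series)):
        if abs(series[t] - last_sent) > eps:
            flags.append(t)
            last_sent = series[t]
    return flags


def predictive_flags(series, shared_change, eps):
    """Steps where the reading departs from the shared-trend prediction by more than eps."""
    known, flags = series[0], []
    for t in range(1, len(series)):
        predicted = known + shared_change
        if abs(series[t] - predicted) > eps:
            flags.append(t)
            known = series[t]
        else:
            known = predicted
    return flags


# Hypothetical soil moisture (percent): nodes a and b dry at the shared rate, node c stays flat.
nodes = {
    "a": [40, 38, 36, 34, 32, 30],
    "b": [41, 39, 37, 35, 33, 31],
    "c": [40, 40, 40, 40, 40, 40],
}
reactive = {name: len(reactive_flags(s, eps=1)) for name, s in nodes.items()}
predictive = {name: len(predictive_flags(s, shared_change=-2, eps=1)) for name, s in nodes.items()}
print(reactive)     # {'a': 5, 'b': 5, 'c': 0}
print(predictive)   # {'a': 0, 'b': 0, 'c': 5}
```

The reactive rule spends every transmission on the two redundant nodes and never reports the node that behaves differently, while the predictive rule does the reverse.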