Digital Images PowerPoint PPT Presentation

Title: Digital Images

1

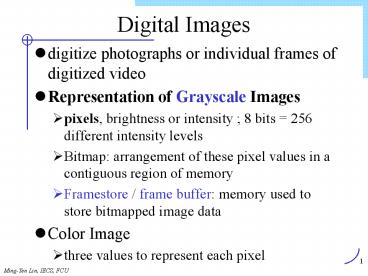

Digital Images

- Obtained by digitizing photographs or individual frames of digitized video
- Representation of grayscale images
  - pixels: each holds a brightness or intensity value; 8 bits give 256 different intensity levels
- Bitmap: arrangement of these pixel values in a contiguous region of memory
- Framestore / frame buffer: memory used to store bitmapped image data
- Color image
  - three values to represent each pixel

2

Parameters of Digital Images

- image size
  - if the original picture resolution is 300 dpi (dots per inch), then the number of pixels (samples) per inch should be at least 300; otherwise, the digital image will be distorted
- pixel depth: the number of bits used to represent each pixel
- amount of data: D = x × y × b (x pixels per line, y lines, b bits per pixel)
  - 512 pixels by 512 lines with a pixel depth of 24 bits: 768 KB
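
The data-size formula above can be checked with a few lines of Python. This is a minimal sketch; the function name `image_data_bytes` is made up for illustration, and 1 KB is assumed to mean 1024 bytes, which is what makes the slide's 768 KB figure come out exactly.

```python
def image_data_bytes(x_pixels: int, y_lines: int, bits_per_pixel: int) -> int:
    """Return D = x * y * b, converted from bits to bytes."""
    total_bits = x_pixels * y_lines * bits_per_pixel
    return total_bits // 8


size_bytes = image_data_bytes(512, 512, 24)
size_kb = size_bytes / 1024  # assumption: 1 KB = 1024 bytes
print(size_bytes, "bytes =", size_kb, "KB")
```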

3

Image Compression

- Works by exploiting redundancies in images and properties of human perception
- Neighboring samples on a scanning line are normally similar (spatial redundancy)
  - removed with predictive coding and other techniques such as transform coding
- Humans tolerate some information loss
  - this makes a high compression ratio achievable
  - perception sensitivities are different for different signal patterns

4

Spatial Subsampling

- We don't have to retain every original pixel to keep the contents of the image
- Encoder: one pixel every few pixels is selected and transmitted (or stored)
- Decoder: missing pixels are interpolated based on the received pixels (alternatively, display the smaller spatially subsampled image)
- If pixels are represented with luminance and chrominance components
  - chrominance is subsampled at a higher ratio and quantized more coarsely
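
A minimal sketch of the encoder/decoder pair for one scan line. The slide does not name an interpolation method, so linear interpolation is an assumption here, as are the function names `subsample` and `interpolate`.

```python
def subsample(line, step):
    """Encoder: keep one pixel out of every `step` pixels."""
    return line[::step]


def interpolate(samples, step, length):
    """Decoder: rebuild `length` pixels by linear interpolation between
    received samples (the last sample is repeated at the right edge)."""
    out = []
    for i in range(length):
        left = i // step
        right = min(left + 1, len(samples) - 1)
        frac = (i % step) / step
        out.append(samples[left] * (1 - frac) + samples[right] * frac)
    return out


line = [10, 12, 14, 16, 18, 20, 22, 24]
sent = subsample(line, 2)                  # half of the pixels are transmitted
rebuilt = interpolate(sent, 2, len(line))  # decoder's approximation of `line`
print(sent, rebuilt)
```

Only the last pixel differs from the original in this smooth example; a line with sharp edges would show larger errors.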

5

Predictive Coding

- Sample values of spatially neighboring picture elements are correlated
- One-dimensional prediction algorithms use the correlation of adjacent picture elements within the scan line
- Algorithms that also use line-to-line and frame-to-frame correlation are called two-dimensional and three-dimensional prediction, respectively
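
One-dimensional prediction can be sketched as follows: each pixel is predicted from its left neighbor and only the prediction error is coded. The function names and the initial prediction of 0 are assumptions made for this illustration.

```python
def dpcm_encode(line):
    """Predict each pixel from the previous one; return the prediction errors."""
    errors = []
    prediction = 0  # assumed initial prediction for the first pixel
    for pixel in line:
        errors.append(pixel - prediction)
        prediction = pixel
    return errors


def dpcm_decode(errors):
    """Rebuild the scan line by adding each error to the running prediction."""
    line = []
    prediction = 0
    for error in errors:
        pixel = prediction + error
        line.append(pixel)
        prediction = pixel
    return line


scan_line = [100, 102, 103, 103, 101, 98]
errors = dpcm_encode(scan_line)
print(errors)
```

Because neighboring pixels are correlated, every error after the first is small and can be coded with fewer bits than the raw pixel values.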

6

Transform Coding

- Decorrelate the image pixels: convert statistically dependent image elements into independent coefficients
- Concentrate the energy of an image onto only a few coefficients, so that the redundancy in the image can be removed
- The original image is usually divided into a number of rectangular blocks or subimages
- A unitary mathematical transform is applied to each subimage
  - it transforms the subimage from the spatial domain into a frequency domain
- If the data in the spatial domain is highly correlated, the resulting data in the frequency domain will be in a suitable form for data reduction with techniques such as Huffman coding and run-length coding

7

Transform Coding (2/2)

- Takes advantage of the statistical dependencies of image elements for redundancy reduction
- Transforms: Karhunen-Loeve transform (KLT), discrete cosine transform (DCT), Walsh-Hadamard transform (WHT), and discrete Fourier transform (DFT)
- KLT is the most efficient; DCT is the most widely used
- Practical transform coding system
  - select the transform type and block size
  - apply the selected transform
  - select and efficiently quantize those transformed coefficients to be retained for transmission or storage
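
The three steps of a practical transform coder can be illustrated on a single 8-sample block. This is a sketch under stated assumptions: it uses a one-dimensional orthonormal DCT written directly from the definition (not an optimized or library routine), and "selecting coefficients" is simplified to keeping the two lowest-frequency ones.

```python
import math


def dct(block):
    """Orthonormal 1-D DCT-II computed directly from its definition."""
    n = len(block)
    out = []
    for k in range(n):
        scale = math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)
        out.append(scale * sum(
            x * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
            for i, x in enumerate(block)))
    return out


def idct(coeffs):
    """Inverse of dct()."""
    n = len(coeffs)
    out = []
    for i in range(n):
        total = 0.0
        for k, c in enumerate(coeffs):
            scale = math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)
            total += scale * c * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
        out.append(total)
    return out


block = [100, 102, 104, 107, 109, 111, 113, 115]  # highly correlated samples
coeffs = dct(block)
kept = coeffs[:2] + [0.0] * (len(coeffs) - 2)     # retain 2 of 8 coefficients
approx = idct(kept)

energy = sum(c * c for c in coeffs)
energy_kept = sum(c * c for c in kept)
print(energy_kept / energy)
print([round(v, 1) for v in approx])
```

For correlated input like this, almost all of the energy sits in the first coefficients, which is the property the slides describe.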

8

Vector Quantization (1/2)

- Shannon's rate-distortion theory: better performance can always be achieved by coding vectors (a group of values) instead of scalars (individual values)
- Vector quantizer: a mapping $Q$ of the K-dimensional Euclidean space $R^K$ into a finite subset $Y$ of $R^K$
  - $Q: R^K \to Y$, with $Y = \{x'_i;\ i = 1, 2, \ldots, N\}$, where $x'_i$ is the ith vector in $Y$
  - $Y$ (a VQ codebook or VQ table) is the set of reproduction vectors
  - $N$ is the number of vectors

9

Vector Quantization (2/2)

- Encoder
  - each data vector $x$ belonging to $R^K$ is matched or approximated with a codeword in the codebook
  - the address or index of that codeword is transmitted
- Decoder
  - the index is mapped back to the codeword
  - the codeword is used to represent the original data vector
- If the image block size is (n x n) pixels and each pixel is represented by m bits, then theoretically $(2^m)^{n \times n}$ types of blocks are possible
- In practice, only a limited number of combinations occur most often, which keeps the codebook small
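
A minimal sketch of the encoder and decoder described above, for 2 x 2 blocks flattened to 4-element vectors. The codebook contents are made up for illustration, and squared Euclidean distance is assumed as the matching criterion; the slides do not say how a codebook is built or which distance is used.

```python
def nearest_index(vector, codebook):
    """Encoder step: index of the codeword closest to `vector`
    (squared Euclidean distance)."""
    def dist2(codeword):
        return sum((a - b) ** 2 for a, b in zip(vector, codeword))
    return min(range(len(codebook)), key=lambda i: dist2(codebook[i]))


def vq_encode(vectors, codebook):
    return [nearest_index(v, codebook) for v in vectors]


def vq_decode(indices, codebook):
    """Decoder step: map each index back to its codeword."""
    return [codebook[i] for i in indices]


# Hypothetical codebook with N = 4 reproduction vectors (K = 4).
codebook = [(0, 0, 0, 0), (128, 128, 128, 128),
            (255, 255, 255, 255), (0, 0, 255, 255)]
blocks = [(3, 1, 0, 2), (250, 251, 255, 249), (10, 5, 240, 250)]

indices = vq_encode(blocks, codebook)
decoded = vq_decode(indices, codebook)
possible_blocks = (2 ** 8) ** (2 * 2)  # (2^m)^(n x n) with m = 8, n = 2
print(indices, decoded, possible_blocks)
```

Each 32-bit block is replaced by a 2-bit index here, at the cost of an approximation error.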

10

Fractal Image Coding

- A fractal
  - an image of a texture or shape expressed as one or more mathematical formulas
  - a geometric form whose irregular details recur at different scales and angles, which can be described by affine or fractal transformations (formulas)
- Fractal geometry: IBM mathematician Benoit B. Mandelbrot, The Fractal Geometry of Nature, 1982
- Fractals have historically been used to generate images
- Fractal formulas can now be used to describe almost all real-world pictures
- Fractal image compression is the inverse of fractal image generation
  - it searches for sets of fractals in a digitized image that describe and represent the entire image
  - the codes are "rules" for reproducing the various sets of fractals that, in turn, regenerate the entire image
- Lossy compression
- Compression is slow, decompression is fast

11

Fractal Coding

12

Wavelet Compression; Practical Coding Systems

- Wavelet compression: the basic principle is similar to that of DCT-based compression, namely to transform signals from the time domain into a new domain
- Practical coding systems
  - (a) Spatial and temporal subsampling
  - (b) DPCM based on motion estimation and compensation
  - (c) Two-dimensional DCT
  - (d) Huffman coding
  - (e) Run-length coding

13

JPEG - Still Image Compression Standard

- Joint Photographic Experts Group
- For continuous-tone (multilevel) still images
  - grayscale and color
- Can also handle full-motion video: the application is called motion JPEG or MJPEG
- Four modes of operation
  - Lossy sequential DCT-based encoding
  - Expanded lossy DCT-based encoding
  - Lossless encoding
  - Hierarchical encoding

14

4 modes

- Lossy sequential DCT-based encoding
  - each image component is encoded in a single left-to-right, top-to-bottom scan
  - called baseline mode
- Expanded lossy DCT-based encoding
  - progressive coding, in which the image is encoded in multiple scans to produce a quick, rough decoded image when the transmission bandwidth is low
- Lossless encoding: exact reproduction
- Hierarchical encoding: the image is encoded in multiple resolutions

15

Baseline mode (1/4)

- Source image data
  - normally transformed into a luminance component Y and two chrominance components U and V
  - U and V are normally spatially subsampled in both the horizontal and vertical directions by a factor of 2
  - this takes advantage of the lower sensitivity of human perception to the chrominance signals
- Original sample values are in the range $[0, 2^b - 1]$, assuming b bits are used for each sample
- Values are shifted into the range $[-2^{b-1}, 2^{b-1} - 1]$, centering on zero
  - to allow a low-precision calculation in the DCT
- For baseline, 8 bits are used for each sample
  - the original value range of [0, 255] is shifted to [-128, 127]

16

Baseline mode (2/4)

- Then, each component is divided into blocks of 8 x 8 pixels
- A two-dimensional forward DCT (FDCT) is applied to each block of data
  - result: an array of 64 coefficients with energy concentrated in the first few coefficients
- These coefficients are quantized using quantization values specified in the quantization tables
  - the quantization values can be adjusted to trade between compression ratio and compressed image quality
- Quantization is carried out by dividing the DCT coefficients by the corresponding specified quantization value and rounding the result to the nearest integer
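
The level shift, forward DCT, and quantization steps can be sketched for one 8 x 8 block as below. This is an illustration only: the DCT is computed directly from its definition rather than with a fast algorithm, and `flat_table` is a hypothetical quantization table (every entry 16), not one of the example tables from the JPEG standard.

```python
import math

N = 8


def level_shift(block, bits=8):
    """Shift samples from [0, 2^b - 1] to [-2^(b-1), 2^(b-1) - 1]."""
    offset = 2 ** (bits - 1)
    return [[p - offset for p in row] for row in block]


def fdct_2d(block):
    """Two-dimensional forward DCT of an N x N block (direct, unoptimized)."""
    def c(k):
        return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)

    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            total = 0.0
            for x in range(N):
                for y in range(N):
                    total += (block[x][y]
                              * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                              * math.cos((2 * y + 1) * v * math.pi / (2 * N)))
            out[u][v] = c(u) * c(v) * total
    return out


def quantize(coeffs, table):
    """Divide each coefficient by its table entry and round to an integer."""
    return [[round(coeffs[u][v] / table[u][v]) for v in range(N)]
            for u in range(N)]


flat_table = [[16] * N for _ in range(N)]  # hypothetical quantization table
block = [[200] * N for _ in range(N)]      # a uniform gray block
q = quantize(fdct_2d(level_shift(block)), flat_table)
print(q[0])
```

For a uniform block only the first (DC) coefficient is nonzero after quantization. Larger table entries would zero out more coefficients in textured blocks, raising the compression ratio at the cost of quality.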

17

Baseline mode (3/4)

- The quantized coefficients are zigzag scanned to obtain a one-dimensional sequence of data for Huffman coding
- The first coefficient is called the DC coefficient, which represents the average intensity of the block
- The rest of the coefficients (1 to 63) are called AC coefficients
- The purpose of zigzag scanning is to order the coefficients in increasing order of spectral frequency
- Since the coefficients of high frequencies are mostly zero, zigzag scanning results in mostly zeros at the end of the scan
  - leading to higher Huffman and run-length coding efficiency
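
A small sketch of how the zigzag order can be generated: coefficients are visited one anti-diagonal at a time, alternating direction. The function names are made up for this example.

```python
def zigzag_order(n=8):
    """Return the (row, column) positions of an n x n block in zigzag order."""
    order = []
    for s in range(2 * n - 1):  # s = row + column identifies one anti-diagonal
        rows = range(max(0, s - n + 1), min(s, n - 1) + 1)
        if s % 2 == 0:
            rows = reversed(rows)  # even diagonals run from bottom-left to top-right
        order.extend((r, s - r) for r in rows)
    return order


def zigzag_scan(block):
    """Flatten a square block into a 1-D list in zigzag order."""
    return [block[r][c] for r, c in zigzag_order(len(block))]


# Label each position with its row-major index to make the order visible.
block = [[r * 8 + c for c in range(8)] for r in range(8)]
sequence = zigzag_scan(block)
print(sequence[:10])
```

Position 0 is the DC coefficient; the remaining 63 entries are the AC coefficients, ending with the highest frequencies.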

18

Baseline mode (4/4)

- The DC coefficient is DPCM coded relative to the DC coefficient of the previous block
- AC coefficients are run-length coded
- DPCM-coded DC coefficients and run-length-coded AC coefficients are then Huffman coded
- The output of the entropy coder is the compressed image data
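
The DPCM and run-length stages can be sketched as follows. This is a simplified illustration, not the exact JPEG symbol format: runs of zeros are coded as (zero_run, value) pairs, a string marker "EOB" stands in for an end-of-block code, and the Huffman stage is omitted.

```python
def dpcm_dc(dc_values):
    """Code each block's DC coefficient as the difference from the previous block's."""
    diffs = []
    previous = 0  # assumed initial predictor
    for dc in dc_values:
        diffs.append(dc - previous)
        previous = dc
    return diffs


def run_length_ac(ac_values):
    """Code AC coefficients as (number of preceding zeros, nonzero value) pairs.
    Trailing zeros are replaced by a single "EOB" marker."""
    pairs = []
    run = 0
    for value in ac_values:
        if value == 0:
            run += 1
        else:
            pairs.append((run, value))
            run = 0
    if run:
        pairs.append("EOB")
    return pairs


dc_diffs = dpcm_dc([36, 38, 35])                     # DC values of three blocks
ac_pairs = run_length_ac([5, 0, 0, -3, 1, 0, 0, 0])  # start of one zigzag sequence
print(dc_diffs, ac_pairs)
```

The long run of zeros that zigzag scanning leaves at the end of each block collapses into the single end marker.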

19

Sequential DCT-based encoding

20

JBIG

- An ISO standard (Joint Bi-level Image Experts Group)
- The intent of JBIG is to replace the current, less effective Group 3 and Group 4 fax algorithms
- A lossless compression algorithm for binary (one bit/pixel) images
- JBIG compression
  - based on a combination of predictive and arithmetic coding
  - can be used on grayscale or even color images for lossless compression
    - by simply applying the algorithm one bit plane at a time
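
Applying a bi-level coder "one bit plane at a time" presupposes splitting the image into bit planes. A minimal sketch of that split and its inverse follows; the function names are illustrative, and the JBIG coding of each plane is not shown.

```python
def bit_planes(pixels, bits=8):
    """Split pixel values into `bits` bi-level planes, most significant first."""
    return [[(p >> b) & 1 for p in pixels] for b in range(bits - 1, -1, -1)]


def from_bit_planes(planes):
    """Recombine the planes (most significant first) into pixel values."""
    pixels = [0] * len(planes[0])
    for plane in planes:
        pixels = [(p << 1) | bit for p, bit in zip(pixels, plane)]
    return pixels


pixels = [0, 255, 128, 5]
planes = bit_planes(pixels)
print(planes[0], planes[-1])
```

Each plane is a one-bit-per-pixel image, so it can be passed to a bi-level compressor, and the planes are recombined losslessly after decoding.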

21

Arithmetic Coding (I)

- Probability
  - space, A, B, E, G, I: 0.1 each
  - L: 0.2
  - S, T: 0.1 each
- Range
  - space [0.0, 0.1), A [0.1, 0.2), ..., I [0.5, 0.6)
  - L [0.6, 0.8), S [0.8, 0.9), T [0.9, 1.0)
- Coding "BILL GATES"

22

Arithmetic Coding (I)

- initial: low = 0.0, high = 1.0
- B → [0.2, 0.3): low = 0.2, high = 0.3
- I → [0.5, 0.6): low = 0.25, high = 0.26
  - (0.3 - 0.2) × 0.5 = 0.05, (0.3 - 0.2) × 0.6 = 0.06
- L → [0.6, 0.8): low = 0.256, high = 0.258
  - (0.26 - 0.25) × 0.6 = 0.006, (0.26 - 0.25) × 0.8 = 0.008
- L → [0.6, 0.8): low = 0.2572, high = 0.2576
  - 0.002 × 0.6 = 0.0012, 0.002 × 0.8 = 0.0016

23

Arithmetic Coding (II)

- space → [0.0, 0.1): low = 0.25720, high = 0.25724
  - 0.0004 × 0.0 = 0, 0.0004 × 0.1 = 0.00004
- G: [0.257216, 0.257220)
- A: [0.2572164, 0.2572168)
- T: [0.25721676, 0.2572168)
- E: [0.257216772, 0.257216776)
- S: [0.2572167752, 0.2572167756)
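
The interval narrowing worked through on these slides can be reproduced with a short sketch. It uses Python's `fractions.Fraction` so the arithmetic is exact; the ranges for B, E, and G are filled in from the 0.1 probabilities and from the intervals the slides compute for those letters, which is an assumption about the elided part of the range table.

```python
from fractions import Fraction

# Symbol ranges [low, high) taken from the slides' probability table.
RANGES = {
    " ": ("0.0", "0.1"), "A": ("0.1", "0.2"), "B": ("0.2", "0.3"),
    "E": ("0.3", "0.4"), "G": ("0.4", "0.5"), "I": ("0.5", "0.6"),
    "L": ("0.6", "0.8"), "S": ("0.8", "0.9"), "T": ("0.9", "1.0"),
}
RANGES = {s: (Fraction(lo), Fraction(hi)) for s, (lo, hi) in RANGES.items()}


def arithmetic_encode(message):
    """Narrow [low, high) once per symbol and return the final interval."""
    low, high = Fraction(0), Fraction(1)
    for symbol in message:
        sym_low, sym_high = RANGES[symbol]
        width = high - low
        high = low + width * sym_high
        low = low + width * sym_low
    return low, high


low, high = arithmetic_encode("BILL GATES")
print(float(low), float(high))
```

Any number inside the final interval identifies the whole message; real coders use fixed-precision integer arithmetic rather than exact fractions.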

24

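Decoding: msg = 0.2572167752

- Decoding is the reverse operation
- msg lies within [0.2, 0.3), so the first symbol is B
- B: (msg - 0.2)/(0.3 - 0.2) = 0.572167752 (the new msg)
- I → [0.5, 0.6): (msg - 0.5)/(0.6 - 0.5) = 0.72167752
- L → [0.6, 0.8): (0.72167752 - 0.6)/(0.8 - 0.6) = 0.6083876
- ... continuing in the same way until msg = 0.8
- S → [0.8, 0.9): (0.8 - 0.8)/(0.9 - 0.8) = 0
- END
- In practice, a binary number close to low is chosen as the code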
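
The decoding steps can be sketched as below, again with exact fractions. Two assumptions are made: the ranges for B, E, and G are filled in from the 0.1 probabilities, and the decoder is told the message length (10 symbols), since the slides only say END without stating how the end is detected.

```python
from fractions import Fraction

# (symbol, low, high) ranges from the slides' probability table.
TABLE = [
    (" ", "0.0", "0.1"), ("A", "0.1", "0.2"), ("B", "0.2", "0.3"),
    ("E", "0.3", "0.4"), ("G", "0.4", "0.5"), ("I", "0.5", "0.6"),
    ("L", "0.6", "0.8"), ("S", "0.8", "0.9"), ("T", "0.9", "1.0"),
]
TABLE = [(s, Fraction(lo), Fraction(hi)) for s, lo, hi in TABLE]


def arithmetic_decode(code, length):
    """Find the range containing `code`, emit its symbol, then rescale `code`."""
    symbols = []
    for _ in range(length):
        for symbol, lo, hi in TABLE:
            if lo <= code < hi:
                symbols.append(symbol)
                code = (code - lo) / (hi - lo)
                break
    return "".join(symbols)


message = arithmetic_decode(Fraction("0.2572167752"), 10)
print(message)
```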

25

JPEG-2000 (1/2)

- Provide a lower bit-rate mode of operation than the existing JPEG and JBIG standards
  - replace them and provide better rate-distortion performance and subjective image quality
- Handle images larger than 64K x 64K pixels without having to use tiling
- Use a single decompression algorithm for all images to avoid the problems of mode incompatibility (sometimes encountered with existing standards)
- Designed with transmission in noisy environments in mind
- Also efficient at compressing computer-generated images
- Handle the mix of bilevel and continuous-tone images required for interchanging and/or storing compound documents as part of document imaging systems
  - in both lossless and lossy formats

26

JPEG-2000 (2/2)

- Allow progressive transmission of images
  - allow images to be reconstructed with increasing pixel accuracy and spatial resolution
- Transmittable in real time over low-bandwidth channels to devices with limited memory space (e.g., a printer buffer)
- Allow specific areas of an image to be compressed with less distortion than others
- Provide
  - facilities for content-based description of the images
  - mechanisms for watermarking, labeling, stamping, and encrypting images
- Still images are compatible with MPEG-4 moving images
- Uses wavelet compression

27

Digital Video Representation (1/3)

- A digital video sequence consists of a number of frames or images that have to be played out at a fixed rate
- The frame rate of a motion video is determined by three major factors
- 1. The frame rate must be high enough to deliver motion smoothly
  - most motion can be delivered smoothly at a frame rate of 25 frames per second
- 2. The higher the frame rate, the higher the bandwidth needed for transmission
  - at a high frame rate, more images (frames) must be transmitted in the same amount of time, so higher bandwidth is required
  - so, to keep the bandwidth as low as possible, use the lowest rate that still delivers most scenes smoothly: about 25 frames per second

28

Digital Video Representation (2/3)

- 3. When the phosphors in a display device are hit by an electron beam, they emit light for a short time, typically a few milliseconds
  - phosphors do not emit more light unless they are hit again by the electron beam
  - the image on the display disappears if it is not redisplayed (refreshed) after this short period
  - if the refresh interval is too large, the display has an annoying flickering appearance
  - the display must be refreshed at least 50 times per second to prevent flicker, which increases the required bandwidth
- Use the interlace technique: the same amount of bandwidth is used but flicker is avoided to a large extent
  - more than one vertical scan is used to reproduce a complete frame
  - each vertical scan is called a field
  - broadcast television uses a 2:1 interlace: 2 vertical scans (fields) for a complete frame
  - one vertical scan (called the odd field) displays all the odd lines of a frame
  - a second vertical scan (called the even field) displays the even lines
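
The 2:1 interlace can be illustrated by splitting a frame, represented here as a list of scan lines, into its two fields and weaving them back together. The function names are made up, and lines are assumed to be numbered from 1, so the odd field starts with the first line.

```python
def split_fields(frame):
    """Split a frame into (odd_field, even_field).
    Scan lines are numbered from 1, so the odd field holds lines 1, 3, 5, ..."""
    return frame[0::2], frame[1::2]


def weave(odd_field, even_field):
    """Interleave the two fields back into a complete frame."""
    frame = []
    for i, line in enumerate(odd_field):
        frame.append(line)
        if i < len(even_field):
            frame.append(even_field[i])
    return frame


frame = ["line1", "line2", "line3", "line4", "line5", "line6"]
odd_field, even_field = split_fields(frame)
print(odd_field, even_field)
```

Each field carries half of the lines, so 50 fields per second needs the same bandwidth as 25 complete frames per second while the screen is still refreshed 50 times per second.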

29

Digital Video Representation (3/3)

- At 25 frames per second, the field rate is 50 fields per second
- The 25 frames (50 fields) per second rate is used in television systems (PAL) in European countries, China, and Australia
- The 30 frames (60 fields) per second rate is used in the television systems (NTSC) of North America and Japan
- The field rates 50 and 60 were chosen to match the frequencies of electricity power distribution networks
- Two major characteristics of video
  - a time dimension
  - a huge amount of data to represent
    - a 10-minute video sequence with image size 512 pixels by 512 lines, pixel depth of 24 bits/pixel, and frame rate 30 frames/s needs 600 × 30 × 512 × 512 × 3 bytes ≈ 13.2 GB of storage (taking 1 GB = 2^30 bytes)
- So, video compression is essential
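
The storage figure for uncompressed video can be recomputed directly. This is a minimal sketch with an illustrative function name; the conversion assumes 1 GB = 2^30 bytes, under which the product 600 × 30 × 512 × 512 × 3 comes to roughly 13.2 GB.

```python
def video_storage_bytes(seconds, frames_per_second, width, height, bytes_per_pixel):
    """Uncompressed size: duration x frame rate x pixels per frame x bytes per pixel."""
    return seconds * frames_per_second * width * height * bytes_per_pixel


total_bytes = video_storage_bytes(600, 30, 512, 512, 3)  # 10 min, 24 bits/pixel
total_gb = total_bytes / 2 ** 30                         # assumption: 1 GB = 2^30 bytes
print(total_bytes, round(total_gb, 2))
```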

30

Video Compression

- Achieved by reducing redundancies and exploiting human perception properties
- Each frame is an image, so video also has spatial redundancies
- Neighboring images in a video sequence are normally similar (called temporal redundancy)
  - removed by applying predictive coding between images
- Reducing temporal redundancies: motion estimation and compensation
  - each picture is divided into fixed-size blocks
  - a closest match for each block is found in the previous picture

31

Motion Estimation and Compensation

- The position displacement between these two blocks is called the motion vector
- A difference block is obtained by calculating pixel-by-pixel differences
- The motion vector and pixel difference block are then encoded and transmitted
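
A minimal sketch of block-based motion estimation for a single block. The slides do not specify the matching criterion or search strategy, so this assumes a full search over a small window and the sum of absolute differences (SAD) as the match measure; all names are illustrative.

```python
def get_block(picture, top, left, size):
    return [row[left:left + size] for row in picture[top:top + size]]


def sad(block_a, block_b):
    """Sum of absolute pixel differences between two equally sized blocks."""
    return sum(abs(a - b)
               for row_a, row_b in zip(block_a, block_b)
               for a, b in zip(row_a, row_b))


def estimate_motion(current, previous, top, left, size, search):
    """Find the closest match in `previous` for the block of `current` at
    (top, left). Returns the motion vector (dy, dx) and the difference block."""
    target = get_block(current, top, left, size)
    best = None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            r, c = top + dy, left + dx
            if r < 0 or c < 0 or r + size > len(previous) or c + size > len(previous[0]):
                continue  # candidate block falls outside the picture
            cost = sad(target, get_block(previous, r, c, size))
            if best is None or cost < best[0]:
                best = (cost, (dy, dx))
    dy, dx = best[1]
    match = get_block(previous, top + dy, left + dx, size)
    diff = [[t - m for t, m in zip(t_row, m_row)]
            for t_row, m_row in zip(target, match)]
    return (dy, dx), diff


# A 2 x 2 object moves from (row 1, col 1) to (row 2, col 3) between pictures.
previous = [[0] * 6 for _ in range(6)]
current = [[0] * 6 for _ in range(6)]
obj = [[200, 210], [220, 230]]
for i in range(2):
    for j in range(2):
        previous[1 + i][1 + j] = obj[i][j]
        current[2 + i][3 + j] = obj[i][j]

vector, diff = estimate_motion(current, previous, top=2, left=3, size=2, search=2)
print(vector, diff)
```

The vector points from the block's position in the current picture to its match in the previous picture, and the all-zero difference block shows that the motion was captured exactly.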

32

MPEG (1/2)

- MPEG was established in 1988 in the framework of the Joint ISO/IEC Technical Committee (JTC 1) on Information Technology
- Its task: develop standards for coded representation of moving pictures, associated audio, and their combination when used for storage and retrieval on digital storage media (DSM)
- The DSM concept includes conventional storage devices, such as CD-ROMs, tape drives, Winchester disks, and writable optical drives, as well as telecommunication channels such as ISDNs and local area networks (LANs)
- The MPEG-4 work item was proposed in May 1991 and approved in July 1993

33

MPEG (2/2)

- The group had three original work items nicknamed MPEG-1, MPEG-2, and MPEG-3
  - coding of moving pictures and associated audio at up to 1.5, 10, and 40 Mbps
- MPEG-1: VHS-quality video (360 x 280 pixels at 30 pictures per second) at a bit rate of around 1.5 Mbps. The rate of 1.5 Mbps was chosen because the throughput of CD-ROM drives at that time was about that rate.
- MPEG-2 was to code CCIR 601 digital-television-quality video (720 x 480 pixels at 30 frames per second) at a bit rate between 2 and 10 Mbps
- MPEG-3 was to code HDTV-quality video at a bit rate of around 40 Mbps. Later on, it was realized that the functionality supported by the MPEG-2 requirements covers the MPEG-3 requirements, so the MPEG-3 work item was dropped in July 1992.

34

MPEG Parts

- Three main parts
  - MPEG-Video: compression of video signals
  - MPEG-Audio: compression of a digital audio signal
  - MPEG-Systems: synchronization and multiplexing of multiple compressed audio and video bitstreams
- A fourth part called Conformance
  - specifies the procedures for determining the characteristics of coded bitstreams and for testing compliance with the requirements stated in Systems, Video, and Audio
- The standards only specify the syntax of coded bitstreams
  - so that decoders conforming to these standards can decode the bitstream
  - the standards do not specify how to generate the bitstream
  - this allows innovation in designing and implementing encoders

35

Standards for Composite Multimedia Documents

- SGML (Standard Generalized Markup Language)
  - std. syntax for defining Document Type Definitions (DTDs) of classes of structured info.
  - HTML is a class of DTD of SGML
- Open Document Arch. (ODA): logical and layout components of documents, interchange format
- Acrobat Portable Document Format
- Hypermedia/Time-Based Structuring Language (HyTime): represents hypermedia doc. using SGML; interrelation of doc. components according to any quantifiable dimension
- MHEG: OO model for MM interchange

36

Major Characteristics and Requirements of Multimedia Data and Applications

- Storage and Bandwidth Requirements
- Semantic Structure of Multimedia Information
- Delay and Delay Jitter Requirements
- Temporal and Spatial Relationships among Related Media
- Subjectiveness and Fuzziness of the Meaning of Multimedia Data

37

Major Characteristics and Requirements (2/3)

- Storage and Bandwidth Requirements
  - Measure
    - storage: bytes, MB
    - bandwidth: bps, Mbps
  - images (storage): X × Y × depth (B, MB)
  - audio, video (bandwidth): bps, Mbps
    - audio: sampling rate × bits/sample
    - video: frame as the unit
- Semantic Structure of Multimedia Information
  - no obvious structure (vs. alphanumeric)
  - features or contents have to be extracted from raw MM data

38

Major Characteristics and Requirements (3/3)

- Delay and Delay Jitter Requirements
  - time-dependent continuous media
  - MIRSs are interactive (delay, response time)
- Temporal and Spatial Relationships among Related Media
  - retrieval and transmission of media must be coordinated w.r.t. their temporal relationship: synchronization
    - 1) mechanisms/tools for users to specify the relationships
    - 2) guarantee them by overcoming the indeterministic nature of comm.