Pearson Product Moment Coefficient of Correlation: - PowerPoint PPT Presentation


1

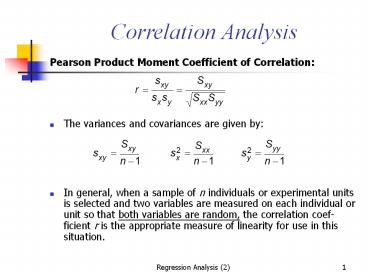

Correlation Analysis

- Pearson Product Moment Coefficient of Correlation: $r = \dfrac{s_{xy}}{s_x s_y} = \dfrac{S_{xy}}{\sqrt{S_{xx} S_{yy}}}$
- The variances and covariance are given by $s_x^2 = \dfrac{S_{xx}}{n-1}$, $s_y^2 = \dfrac{S_{yy}}{n-1}$, and $s_{xy} = \dfrac{S_{xy}}{n-1}$.
- In general, when a sample of n individuals or experimental units is selected and two variables are measured on each individual or unit so that both variables are random, the correlation coefficient r is the appropriate measure of linearity for use in this situation.

2

Example: The heights and weights of n = 10 offensive backfield football players are randomly selected from a county's football all-stars. Calculate the correlation coefficient for the heights (in inches) and weights (in pounds) given in the table below.

Table: Heights and weights of n = 10 backfield all-stars

| Player | Height x (in) | Weight y (lb) |
|---|---|---|
| 1 | 73 | 185 |
| 2 | 71 | 175 |
| 3 | 75 | 200 |
| 4 | 72 | 210 |
| 5 | 72 | 190 |
| 6 | 75 | 195 |
| 7 | 67 | 150 |
| 8 | 69 | 170 |
| 9 | 71 | 180 |
| 10 | 69 | 175 |

3

- Solution
- You should use the appropriate data entry method of your scientific calculator to verify the calculations for the sums of squares and cross-products, $S_{xx} = 60.4$, $S_{yy} = 2610$, and $S_{xy} = 328$, using the calculational formulas given earlier in this chapter. Then $r = \dfrac{S_{xy}}{\sqrt{S_{xx} S_{yy}}} = \dfrac{328}{\sqrt{(60.4)(2610)}} = .8261$, or r = .83.
- This value of r is fairly close to 1, the largest possible value of r, which indicates a fairly strong positive linear relationship between height and weight.
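The calculation can also be checked in a few lines of code. The following is a minimal sketch in plain Python, using the ten height and weight pairs from the table; the function name `sums_of_squares` is an illustrative choice, not something from the slides.

```python
import math

# Heights (inches) and weights (pounds) of the 10 backfield all-stars
heights = [73, 71, 75, 72, 72, 75, 67, 69, 71, 69]
weights = [185, 175, 200, 210, 190, 195, 150, 170, 180, 175]

def sums_of_squares(x, y):
    """Return (Sxx, Syy, Sxy) using the computational formulas."""
    n = len(x)
    sxx = sum(v * v for v in x) - sum(x) ** 2 / n
    syy = sum(v * v for v in y) - sum(y) ** 2 / n
    sxy = sum(a * b for a, b in zip(x, y)) - sum(x) * sum(y) / n
    return sxx, syy, sxy

sxx, syy, sxy = sums_of_squares(heights, weights)
r = sxy / math.sqrt(sxx * syy)  # Pearson correlation coefficient

print(f"Sxx = {sxx:.1f}, Syy = {syy:.1f}, Sxy = {sxy:.1f}, r = {r:.4f}")
```

The printed value of r should agree with the .8261 used later in the significance test.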

4

- There is a direct relationship between the calculation formulas for the correlation coefficient r and the slope of the regression line b: $r = \dfrac{S_{xy}}{\sqrt{S_{xx} S_{yy}}}$ and $b = \dfrac{S_{xy}}{S_{xx}}$.
- Since the numerator of both quantities is $S_{xy}$, both r and b have the same sign.
- Therefore, the correlation coefficient has these general properties:
- When r = 0, the slope is 0, and there is no linear relationship between x and y.
- When r is positive, so is b, and there is a positive relationship between x and y.
- When r is negative, so is b, and there is a negative relationship between x and y.
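The shared sign can be made explicit by writing b in terms of r. This short derivation is a sketch that uses only the two formulas named above; the numerical check assumes the sums of squares computed from the height and weight table ($S_{xx} = 60.4$, $S_{yy} = 2610$).

```latex
b = \frac{S_{xy}}{S_{xx}}
  = \frac{S_{xy}}{\sqrt{S_{xx} S_{yy}}} \cdot \sqrt{\frac{S_{yy}}{S_{xx}}}
  = r \sqrt{\frac{S_{yy}}{S_{xx}}}
  = r \, \frac{s_y}{s_x}

% Check with the height and weight data:
% b = 0.8261 \sqrt{2610 / 60.4} \approx 5.43 \quad (\text{and } 328 / 60.4 \approx 5.43)
```

Because $\sqrt{S_{yy}/S_{xx}}$ is always positive, b and r can differ in magnitude but never in sign.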

5

The relationship between r (correlation coefficient) and the regression model

6

Figure: Some typical scatter plots

7

- The population correlation coefficient ρ is calculated and interpreted as it is in the sample.
- The experimenter can test the hypothesis that there is no correlation between the variables x and y using a test statistic that is exactly equivalent to the test of the slope β in the previous section.

8

- Test of Hypothesis Concerning the Correlation Coefficient ρ
- 1. Null hypothesis: $H_0: \rho = 0$
- 2. Alternative hypothesis:
- One-tailed test: $H_a: \rho > 0$ (or $H_a: \rho < 0$)
- Two-tailed test: $H_a: \rho \neq 0$
- 3. Test statistic: $t = r\sqrt{\dfrac{n-2}{1-r^2}}$
- When the assumptions are satisfied, the test statistic will have a Student's t distribution with (n - 2) degrees of freedom.

9

When comparing to a non-zero constant

- 1. Null hypothesis: $H_0: \rho = \rho_0$
- 2. Alternative hypothesis:
- One-tailed test: $H_a: \rho > \rho_0$ (or $H_a: \rho < \rho_0$)
- Two-tailed test: $H_a: \rho \neq \rho_0$
- 3. Test statistic
- When the assumptions are satisfied, the test statistic will have a Student's t distribution with (n - 2) degrees of freedom.

10

- 4. Rejection region: Reject $H_0$ when
- One-tailed test: $t > t_{\alpha,\,n-2}$ (or $t < -t_{\alpha,\,n-2}$ when the alternative hypothesis is $H_a: \rho < 0$ or $H_a: \rho < \rho_0$)
- Two-tailed test: $t > t_{\alpha/2,\,n-2}$ or $t < -t_{\alpha/2,\,n-2}$
- or when p-value < α

11

- Example: Refer to the height and weight data in the previous example. The correlation of height and weight was calculated to be r = .8261. Is this correlation significantly different from 0?

Solution: To test the hypotheses $H_0: \rho = 0$ versus $H_a: \rho \neq 0$, the value of the test statistic is $t = r\sqrt{\dfrac{n-2}{1-r^2}} = .8261\sqrt{\dfrac{10-2}{1-(.8261)^2}} = 4.15$, which for n = 10 has a t distribution with 8 degrees of freedom. Since this value is greater than $t_{.005} = 3.355$, the two-tailed p-value is less than 2(.005) = .01, and the correlation is declared significant at the 1% level (P < .01). The value $r^2 = (.8261)^2 = .6824$ means that about 68% of the variation in one of the variables is explained by the other. The Minitab printout in Figure 12.17 displays the correlation r and the exact p-value for testing its significance.
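The test can be reproduced with the standard library alone. This is a minimal sketch: the function name `correlation_t_statistic` is illustrative, and the critical value 3.355 is simply the tabled $t_{.005}$ for 8 degrees of freedom quoted in the example, not something computed here.

```python
import math

def correlation_t_statistic(r, n):
    """t statistic for H0: rho = 0; it has n - 2 degrees of freedom."""
    return r * math.sqrt((n - 2) / (1 - r ** 2))

r, n = 0.8261, 10
t = correlation_t_statistic(r, n)

t_crit = 3.355  # t_.005 with 8 df, taken from the example (table lookup)
reject_at_1_percent = abs(t) > t_crit  # two-tailed test at alpha = .01

print(f"t = {t:.2f}, r^2 = {r ** 2:.4f}, reject H0 at 1%: {reject_at_1_percent}")
```

An exact p-value would need a t-distribution CDF, which is what a statistics package such as the Minitab printout supplies.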

12

- r is a measure of linear correlation only: x and y could be perfectly related by some curvilinear function even when the observed value of r is equal to 0.
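A small constructed example makes this concrete. In the sketch below, y is determined exactly by x through y = x², yet r is zero because the x values are symmetric about 0. The data and the helper name `pearson_r` are illustrative and not taken from the slides.

```python
import math

def pearson_r(x, y):
    """Pearson correlation from deviations about the means."""
    n = len(x)
    xbar, ybar = sum(x) / n, sum(y) / n
    sxy = sum((a - xbar) * (b - ybar) for a, b in zip(x, y))
    sxx = sum((a - xbar) ** 2 for a in x)
    syy = sum((b - ybar) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

x = [-3, -2, -1, 0, 1, 2, 3]
y = [v ** 2 for v in x]  # perfect curvilinear (quadratic) relationship

r = pearson_r(x, y)
print(f"r = {r:.4f}")  # no linear association despite an exact functional relation
```

A scatter plot of these points would show the parabola immediately, which is why plotting the data should accompany any computed r.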

13

Testing for Goodness of Fit

- In general, we do not know the underlying distribution of the population, and we wish to test the hypothesis that a particular distribution will be satisfactory as a population model.
- Probability plotting can only be used for examining whether a population is normally distributed.
- Histograms and similar plots can only be used to guess the possible type of underlying distribution.

14

Goodness-of-Fit Test (I)

- A random sample of size n is taken from a population whose probability distribution is unknown.
- These n observations are arranged in a frequency histogram having k bins or class intervals.
- Let $O_i$ be the observed frequency in the ith class interval, and $E_i$ be the expected frequency in the ith class interval from the hypothesized probability distribution. The test statistic is $\chi_0^2 = \sum_{i=1}^{k} \dfrac{(O_i - E_i)^2}{E_i}$.

15

Goodness-of-Fit Test (II)

- If the population follows the hypothesized distribution, $\chi_0^2$ has approximately a chi-square distribution with k - p - 1 d.f., where p represents the number of parameters of the hypothesized distribution estimated by sample statistics.
- That is, $\chi_0^2 \sim \chi^2_{k-p-1}$ (approximately).
- Reject the hypothesis if $\chi_0^2 > \chi^2_{\alpha,\,k-p-1}$.

16

Goodness-of-Fit Test (III)

- Class intervals are not required to be of equal width.
- The minimum value of the expected frequency cannot be too small. Values of 3, 4, and 5 are commonly used as the minimum.
- When the minimum value of the expected frequency is too small, we can combine this class interval with a neighboring class interval. In this case, k would be reduced by one.
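The combining rule can be sketched as a small helper. This is one possible implementation, not a prescribed one: it merges any cell whose expected frequency is below the threshold into its left neighbor (or the right neighbor for the first cell). The function name `merge_small_cells`, the default threshold of 3, and the sample frequencies are all hypothetical.

```python
def merge_small_cells(observed, expected, min_expected=3.0):
    """Merge cells with expected frequency below min_expected into a neighbor."""
    obs, exp = list(observed), list(expected)
    i = 0
    while len(exp) > 1 and i < len(exp):
        if exp[i] >= min_expected:
            i += 1
            continue
        j = i - 1 if i > 0 else 1   # neighbor that absorbs cell i
        obs[j] += obs[i]
        exp[j] += exp[i]
        del obs[i], exp[i]
        i = max(i - 1, 0)           # re-check the merged cell
    return obs, exp

# Hypothetical frequencies: the last cell has expected frequency 2.0 < 3
merged_obs, merged_exp = merge_small_cells(
    [12, 20, 10, 6, 2], [11.0, 19.5, 12.0, 5.5, 2.0]
)
print(merged_obs, merged_exp)
```

Each merge reduces k by one, so the degrees of freedom k - p - 1 should be computed from the merged cell count.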

17

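Example 8-18: The number of defects in printed circuit boards is hypothesized to follow a Poisson distribution. A random sample of n = 60 printed boards has been collected, and the observed numbers of defects are summarized in the table below.

| Number of defects | Observed frequency |
|---|---|
| 0 | 32 |
| 1 | 15 |
| 2 | 9 |
| 3 | 4 |

- The only parameter of the Poisson distribution is λ, which can be estimated by the sample mean: [0(32) + 1(15) + 2(9) + 3(4)]/60 = 0.75. Therefore, the expected frequency is $E_i = n p_i$, where $p_i = \dfrac{e^{-0.75}(0.75)^i}{i!}$.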

18

Example 8-18 (Cont.)

- Since the expected frequency in the last cell is less than 3, we combine the last two cells.

19

Example 8-18 (Cont.)

- 1. The variable of interest is the form of the distribution of defects in printed circuit boards.
- 2. $H_0$: The form of the distribution of defects is Poisson. $H_1$: The form of the distribution of defects is not Poisson.
- 3. k = 3, p = 1, so k - p - 1 = 1 d.f.
- 4. At α = 0.05, we reject $H_0$ if $\chi_0^2 > \chi^2_{0.05,\,1} = 3.84$.
- 5. The test statistic is $\chi_0^2 = \sum_{i=1}^{3} \dfrac{(O_i - E_i)^2}{E_i} = 2.94$.
- 6. Since $\chi_0^2 = 2.94 < \chi^2_{0.05,\,1} = 3.84$, we are unable to reject the null hypothesis that the distribution of defects in printed circuit boards is Poisson.
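The whole example can be traced in code. The sketch below assumes the observed counts 32, 15, 9, and 4 for 0, 1, 2, and 3 defects, which is the reading consistent with n = 60 and a sample mean of 0.75. It also assumes the last cell is treated as "3 or more" so the probabilities sum to 1. The critical value 3.84 is the tabled one quoted in the example.

```python
import math

defects = [0, 1, 2, 3]
observed = [32, 15, 9, 4]   # assumed counts; they sum to n = 60
n = sum(observed)

# Estimate lambda by the sample mean
lam = sum(d * o for d, o in zip(defects, observed)) / n

def poisson_pmf(k, lam):
    return math.exp(-lam) * lam ** k / math.factorial(k)

# Cell probabilities: P(0), P(1), P(2), and P(3 or more) as the remainder
probs = [poisson_pmf(k, lam) for k in (0, 1, 2)]
probs.append(1 - sum(probs))
expected = [n * p for p in probs]

# Last expected frequency is below 3, so combine the last two cells (k = 3)
obs_c = observed[:2] + [observed[2] + observed[3]]
exp_c = expected[:2] + [expected[2] + expected[3]]

chi2 = sum((o - e) ** 2 / e for o, e in zip(obs_c, exp_c))
chi2_crit = 3.84            # chi-square, alpha = 0.05, 1 d.f. (table value)

print(f"lambda = {lam:.2f}, chi2 = {chi2:.2f}, reject H0: {chi2 > chi2_crit}")
```

With unrounded probabilities the statistic comes out slightly above the 2.94 shown in the example, which rounds the cell probabilities first; the conclusion is the same.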

20

Contingency Table Tests

- Example 8-20
- A company has to choose among three pension plans. Management wishes to know whether the preference for plans is independent of job classification and wants to use α = 0.05. The opinions of a random sample of 500 employees are shown in Table 8-4.

21

Contingency Table Test: The Problem Formulation (I)

- There are two classifications: one has r levels and the other has c levels (here, 3 pension plans and 2 types of workers).
- We want to know whether the two methods of classification are statistically independent (whether the preference for pension plans is independent of job classification).
- The table

22

Contingency Table Test: The Problem Formulation (II)

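- Let $p_{ij}$ be the probability that a randomly selected element falls in the ijth cell, given that the two classifications are independent. Then $p_{ij} = u_i v_j$, where the estimators for $u_i$ and $v_j$ are $\hat{u}_i = \dfrac{1}{n}\sum_{j=1}^{c} O_{ij}$ and $\hat{v}_j = \dfrac{1}{n}\sum_{i=1}^{r} O_{ij}$.
- Therefore, the expected frequency of each cell is $E_{ij} = n\,\hat{u}_i\,\hat{v}_j = \dfrac{1}{n}\sum_{j=1}^{c} O_{ij} \sum_{i=1}^{r} O_{ij}$.
- Then, for large n, the statistic $\chi_0^2 = \sum_{i=1}^{r}\sum_{j=1}^{c} \dfrac{(O_{ij} - E_{ij})^2}{E_{ij}}$ has an approximate chi-square distribution with (r-1)(c-1) d.f.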
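The formulation above translates directly into code. This is a minimal sketch with a hypothetical 2 × 3 table of counts (these are not the Table 8-4 data, which is not reproduced in this transcript). The function name `chi_square_independence` is illustrative, and 5.99 is the tabled chi-square critical value for α = 0.05 with 2 d.f.

```python
def chi_square_independence(table):
    """Return (chi-square statistic, degrees of freedom) for an r x c table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, o_ij in enumerate(row):
            # E_ij = n * u_i * v_j = (row total)(column total) / n
            e_ij = row_totals[i] * col_totals[j] / n
            chi2 += (o_ij - e_ij) ** 2 / e_ij
    df = (len(row_totals) - 1) * (len(col_totals) - 1)
    return chi2, df

# Hypothetical counts: 2 job classifications (rows) x 3 plans (columns)
counts = [[20, 30, 50],
          [30, 30, 40]]

chi2, df = chi_square_independence(counts)
chi2_crit = 5.99  # chi-square, alpha = 0.05, 2 d.f. (table value)
print(f"chi2 = {chi2:.3f}, df = {df}, reject independence: {chi2 > chi2_crit}")
```

For these illustrative counts the statistic is below the critical value, so independence would not be rejected.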

23

Example 8-20

25

Key Concepts and Formulas

- I. A Linear Probabilistic Model
- 1. When the data exhibit a linear relationship, the appropriate model is $y = \alpha + \beta x + \varepsilon$.
- 2. The random error ε has a normal distribution with mean 0 and variance $\sigma^2$.
- II. Method of Least Squares
- 1. Estimates a and b, for α and β, are chosen to minimize SSE, the sum of the squared deviations about the regression line, $\hat{y} = a + bx$.

26

- 2. The least squares estimates are $b = S_{xy}/S_{xx}$ and $a = \bar{y} - b\bar{x}$.
- III. Analysis of Variance
- 1. Total SS = SSR + SSE, where Total SS = $S_{yy}$ and SSR = $(S_{xy})^2 / S_{xx}$.
- 2. The best estimate of $\sigma^2$ is MSE = SSE / (n - 2).
- IV. Testing, Estimation, and Prediction
- 1. A test for the significance of the linear regression, $H_0: \beta = 0$, can be implemented using one of the two test statistics $t = \dfrac{b}{\sqrt{\text{MSE}/S_{xx}}}$ or $F = \dfrac{\text{MSR}}{\text{MSE}}$.

27

- 2. The strength of the relationship between x and y can be measured using $r^2 = \dfrac{\text{SSR}}{\text{Total SS}}$, which gets closer to 1 as the relationship gets stronger.
- 3. Use residual plots to check for nonnormality, inequality of variances, and an incorrectly fit model.
- 4. Confidence intervals can be constructed to estimate the intercept α and slope β of the regression line and to estimate the average value of y, E(y), for a given value of x.
- 5. Prediction intervals can be constructed to predict a particular observation, y, for a given value of x. For a given x, prediction intervals are always wider than confidence intervals.

28

- V. Correlation Analysis
- 1. Use the correlation coefficient to measure the relationship between x and y when both variables are random.
- 2. The sign of r indicates the direction of the relationship; r near 0 indicates no linear relationship, and r near 1 or -1 indicates a strong linear relationship.
- 3. A test of the significance of the correlation coefficient is identical to the test of the slope β.
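The key formulas can be tied together numerically. The sketch below applies them to the height and weight data from the earlier example and checks that the t statistic for the slope equals the t statistic for the correlation, and that F equals t². Variable names are illustrative, and MSR = SSR is assumed because the regression has 1 degree of freedom.

```python
import math

x = [73, 71, 75, 72, 72, 75, 67, 69, 71, 69]            # heights (in)
y = [185, 175, 200, 210, 190, 195, 150, 170, 180, 175]  # weights (lb)
n = len(x)
xbar, ybar = sum(x) / n, sum(y) / n

sxx = sum((v - xbar) ** 2 for v in x)
syy = sum((v - ybar) ** 2 for v in y)
sxy = sum((a - xbar) * (b - ybar) for a, b in zip(x, y))

# II. Least squares estimates
b = sxy / sxx
a = ybar - b * xbar

# III. Analysis of variance: Total SS = SSR + SSE
ssr = sxy ** 2 / sxx
sse = syy - ssr
mse = sse / (n - 2)

# IV. Two equivalent tests of H0: beta = 0
t_slope = b / math.sqrt(mse / sxx)
f_stat = ssr / mse          # MSR = SSR / 1

# V. Correlation and its t statistic
r = sxy / math.sqrt(sxx * syy)
t_corr = r * math.sqrt((n - 2) / (1 - r ** 2))

print(f"y-hat = {a:.2f} + {b:.2f} x, r^2 = {ssr / syy:.4f}")
print(f"t (slope) = {t_slope:.3f}, t (correlation) = {t_corr:.3f}, F = {f_stat:.3f}")
```

The two t values agree to floating-point precision, which is the sense in which the correlation test is identical to the slope test.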

29

Cause and Effect

- X could cause Y

- Y could cause X

- X and Y could cause each other

- X and Y could be caused by a third variable Z

- X and Y could be related by chance

- Bad (or good) luck

- A careful examination of the study is needed. Try to find previous evidence or academic explanations.